Chapter 3: The Value of Touch — Why Force Information Is Essential

Summary

Adding tactile information consistently improves robot manipulation performance: OSMO [#18] +16%p, VTDexManip +20%, ForceVLA [#1] +23.2%p, UMI-FT [#36] +76%p. The counter-intuitive finding that broad-coverage simple sensors outperform narrow high-resolution ones (Ye et al. binary touch, 85% on seen objects) justifies TacGlove [#26]'s "8 sensors, 3-axis" design. This chapter analyzes the quantitative value of tactile addition, sensor selection trade-offs, and the Embodiment Bridge concept.

3.1 Introduction: Is Vision Sufficient?

As Chapter 2 confirmed, current large-scale human data collection (EgoDex, Ego4D, BuildAI) and most learning pipelines (EgoMimic, EgoScale, AoE) rely exclusively on visual information. This approach has achieved substantial results — EgoScale demonstrated +54% improvement and a log-linear scaling law using 20,854 hours of visual data alone.

However, certain questions cannot be answered by vision alone: "How firmly should this object be grasped?" "Is it slipping?" "Is the surface rigid or compliant?" Without this information, robots systematically fail at contact-rich tasks.

Quantitative evidence: EgoZero [5] achieved 70% average success across 7 tasks using vision alone, far short of the 95%+ required for industrial applications. AoE [6] recorded only 20% on the Push Bowl & Pour Seed task with vision-only co-training — the clearest case of vision's limitations in contact-rich settings.

3.2 Quantitative Value of Tactile Addition

OSMO: +16%p (Real-World, Tactile Glove)

OSMO [1] [#18] is the only study in this survey that directly compares performance across modality combinations under identical experimental conditions.

| Modality Combination | Success Rate | Std Dev |
|---|---|---|
| Proprioception only | 27.12% | ±32.38% |
| Vision + proprioception | 55.75% | ±30.01% |
| Tactile + vision + proprioception | 71.69% | ±27.43% |

Tactile addition effect: +15.94%p over vision + proprioception. The standard deviation also fell from 30.01% to 27.43%, suggesting that tactile input may improve consistency as well as average performance, although a 2.6-point reduction is modest against spreads of about 30%.
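This chapter reports most gains in percentage points (%p), the absolute difference between two success rates, while some studies report relative percentages. The short sketch below uses only the OSMO numbers from the table above to show how far apart the two units can be; the helper names are illustrative, not taken from OSMO.

```python
# OSMO success rates (%) from the modality table in this section.
RATES = {
    "proprio": 27.12,
    "vision+proprio": 55.75,
    "tactile+vision+proprio": 71.69,
}


def point_gain(before: float, after: float) -> float:
    """Absolute gain in percentage points (%p)."""
    return round(after - before, 2)


def relative_gain(before: float, after: float) -> float:
    """Gain relative to the starting rate, in percent (%)."""
    return round(100.0 * (after - before) / before, 1)


base = RATES["vision+proprio"]
full = RATES["tactile+vision+proprio"]
print(point_gain(base, full))     # 15.94
print(relative_gain(base, full))  # 28.6
```

The same +15.94%p tactile gain is a roughly 29% relative improvement over vision + proprioception, so when a study does not state which unit it uses, effect sizes are easy to over- or under-compare.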

This result was achieved on a single task (wiping) with 140 demos (~2 hours), so generalizability requires further validation. Wiping demands sustained contact pressure, an inherently tactile-favorable condition. Whether the same effect appears on simpler tasks like pick-and-place remains unverified.

VTDexManip: +20% per Modality (Simulation, Binary)

VTDexManip [2] isolated per-modality contributions across 6 dexterous manipulation RL benchmarks:

- Vision only → baseline

- Adding binary tactile → roughly +20%

- Adding joint visual-tactile pretraining → roughly +20% more

The key finding is that even binary (contact/no-contact) tactile information yields meaningful improvement. This suggests that tactile value does not reside solely in high-resolution force measurement.

Limitation: simulation-based results; real-world reproduction requires separate validation.

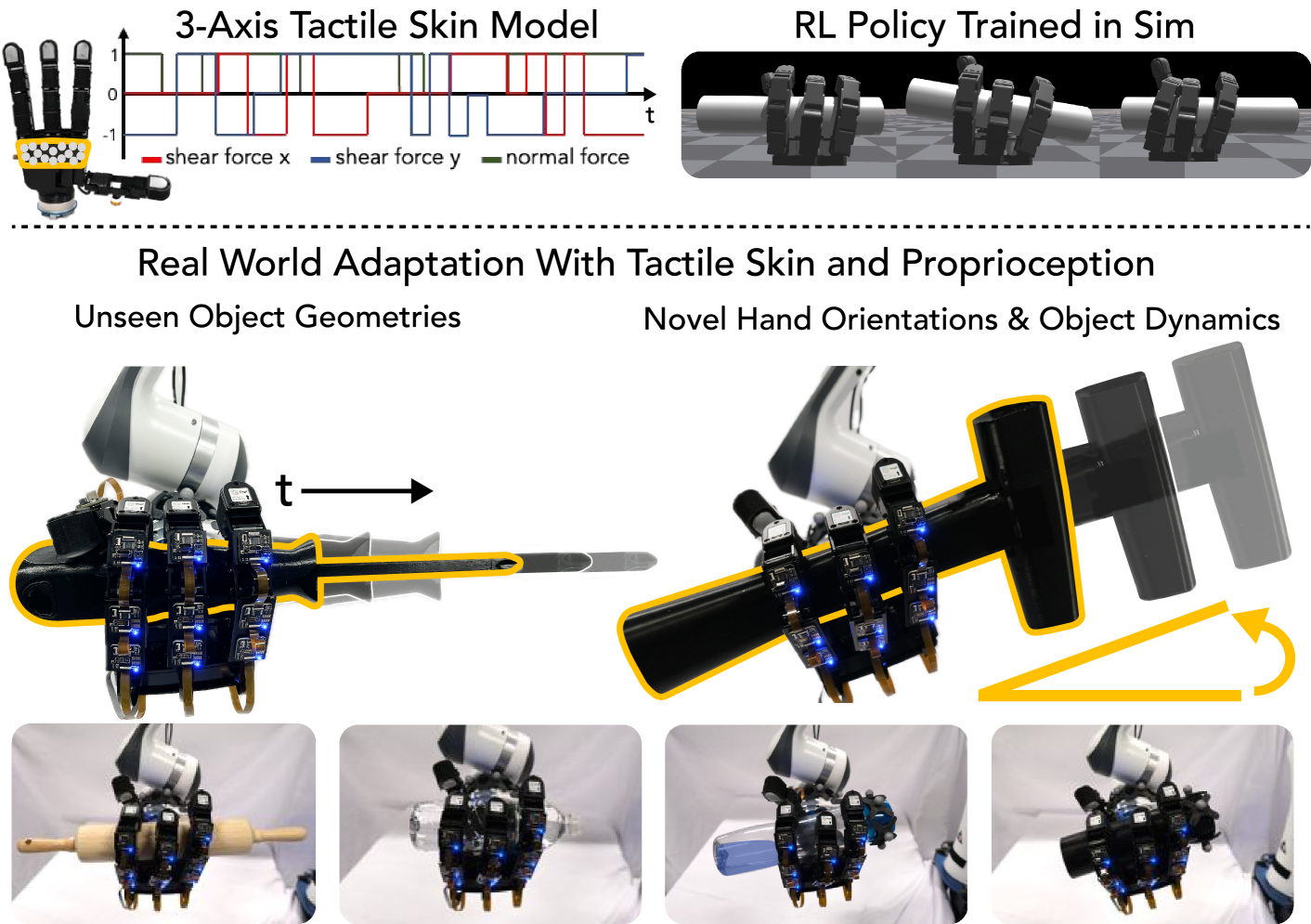

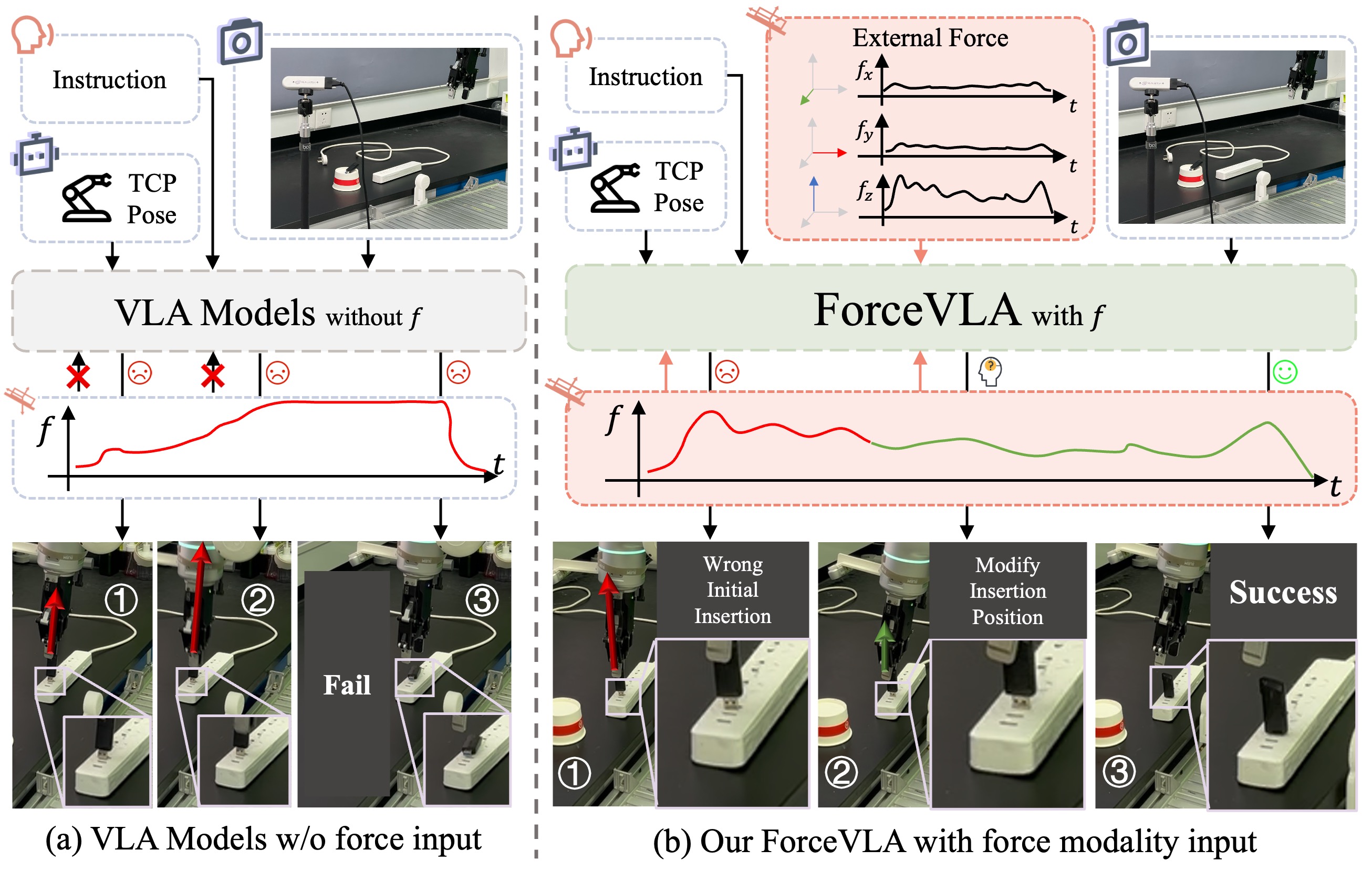

ForceVLA: +23.2%p (Vision-Based Force Estimation)

ForceVLA [#1] took an indirect approach, estimating forces from vision rather than direct tactile sensing. Integrating vision-based force estimation into a VLA yielded +23.2%p improvement. This result demonstrates "the value of force information itself" independent of methodology — if estimated values yield significant gains, the potential value of direct tactile sensors is even greater.

DexUMI: Grasping Fails Without Tactile

DexUMI's [4] [#8] ablation provides the most direct evidence for tactile necessity: policies with tactile sensors succeeded at grasping, while removing tactile input caused them to fail. Among the wearable capture systems reviewed here, it is the only real-world result demonstrating this necessity.

Ye et al.: 85% with Binary Touch (Science Robotics, 2026)

Ye et al. [3] achieved 85% on seen tasks and 73% on all tasks using only binary touch sensors and a standard webcam on the LEAP Hand. The key technique was visual-tactile self-supervised pretraining, which improved learning efficiency, narrowed the sim-to-real gap, and aided generalization to unseen tasks.

UMI-FT: 16%→92% (Real-World, 6-Axis F/T)

UMI-FT [10] [#36] provides the most dramatic demonstration of what force sensing adds. On whiteboard wiping, position control (DP) alone achieved 16%, while adding force sensing with adaptive compliance (ACP) raised success to 92%, a +76%p improvement. On light bulb insertion, success went from 0% to 95%: a task the position-only policy never completed became reliable once force feedback was available. Because this was achieved with only one CoinFT 6-axis F/T sensor per finger (2 total), the result suggests that even minimal force sensing can decide success or failure on contact-rich tasks.
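Why force feedback rescues a task like wiping can be illustrated with a toy 1-D model. The sketch below compares a position-only controller, which holds a fixed commanded depth, with a damping-only admittance law that moves the tool in proportion to the force error. It is a generic illustration, not UMI-FT's or ACP's algorithm; the surface stiffness, target force, gain, and geometry are all assumed values.

```python
def contact_force(x: float, surface: float, k_env: float) -> float:
    """Linear spring contact: force (N) grows with penetration past the surface."""
    return k_env * max(0.0, x - surface)


def position_only(x_cmd: float, surface: float, k_env: float) -> float:
    """Hold a fixed commanded depth; the force is whatever the geometry gives."""
    return contact_force(x_cmd, surface, k_env)


def admittance_control(surface: float, k_env: float, f_target: float,
                       x0: float = 0.0, gain: float = 1e-4, steps: int = 500) -> float:
    """Damping-only admittance: step the commanded depth by gain * force error.

    In contact, the force error shrinks by (1 - gain * k_env) per step,
    so the loop converges when gain * k_env is in (0, 2); here it is 0.2.
    """
    x = x0
    for _ in range(steps):
        f = contact_force(x, surface, k_env)
        x += gain * (f_target - f)  # no contact -> advance; too much force -> retreat
    return contact_force(x, surface, k_env)


K_ENV = 2000.0   # surface stiffness, N/m (assumed)
F_TARGET = 5.0   # desired wiping force, N (assumed)
X_CMD = 0.0125   # depth tuned for a nominal surface at 0.010 m (assumed)

for surface in (0.010, 0.013, 0.008):  # nominal, 3 mm farther, 2 mm closer
    print(f"surface {surface:.3f} m: "
          f"position-only {position_only(X_CMD, surface, K_ENV):.2f} N, "
          f"admittance {admittance_control(surface, K_ENV, F_TARGET):.2f} N")
```

When the board sits 3 mm farther than expected, the position-only controller never touches it (0 N), and when it sits 2 mm closer it presses almost twice as hard; the force-driven loop settles at the 5 N target in both cases.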

EquiTac: 90% from 10 Demos (Equivariant Tactile)

EquiTac [11] [#37] exploited the SO(2)-equivariant structure of GelSight surface normal maps to achieve 90% success from only 10 demonstrations, whereas the baseline peaked at 75% even with 60 demonstrations. This gain in sample efficiency, obtained from the geometric symmetry of tactile data, shows that tactile sensing improves data efficiency as well as raw performance.
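SO(2) equivariance means that rotating the input rotates the output in a matching way. For a surface normal map, a rotation acts in two places at once: the pixel grid rotates, and the in-plane normal components (n_x, n_y) rotate as a 2-D vector. The NumPy sketch below checks this for the discrete subgroup of 90° rotations and a deliberately simple feature (a contact centroid plus the mean in-plane normal). It illustrates the symmetry EquiTac exploits, not EquiTac's network; the (H, W, 3) layout with channels (n_x, n_y, n_z) and the feature itself are assumptions.

```python
import numpy as np

# +90 degree rotation in (x = column, y = row) coordinates; matches np.rot90(k=1).
R90 = np.array([[0.0, 1.0], [-1.0, 0.0]])


def rotate_normal_map(normals: np.ndarray) -> np.ndarray:
    """Apply a 90-degree rotation to an (H, W, 3) map of (n_x, n_y, n_z)."""
    rotated = np.rot90(normals, k=1, axes=(0, 1)).copy()  # move the pixels
    nx = rotated[..., 0].copy()
    ny = rotated[..., 1].copy()
    rotated[..., 0] = ny   # rotate the in-plane vector (n_x, n_y) by R90;
    rotated[..., 1] = -nx  # n_z is a scalar and stays unchanged
    return rotated


def contact_features(normals: np.ndarray) -> np.ndarray:
    """Return a 2x2 array: row 0 = contact centroid, row 1 = mean in-plane normal."""
    h, w, _ = normals.shape
    weight = 1.0 - normals[..., 2]          # more tilt -> treated as more contact
    rows, cols = np.mgrid[0:h, 0:w]
    dx = cols - (w - 1) / 2.0               # offsets from the image center
    dy = rows - (h - 1) / 2.0
    centroid = np.array([(weight * dx).sum(), (weight * dy).sum()]) / weight.sum()
    mean_normal = normals[..., :2].reshape(-1, 2).mean(axis=0)
    return np.stack([centroid, mean_normal])


# Synthetic, non-square normal map with small random tilts.
rng = np.random.default_rng(0)
tilt = rng.normal(scale=0.2, size=(12, 16, 2))
nz = np.sqrt(np.clip(1.0 - (tilt ** 2).sum(axis=-1), 0.0, None))
normals = np.concatenate([tilt, nz[..., None]], axis=-1)

# Equivariance: features of the rotated map == rotated features of the map.
lhs = contact_features(rotate_normal_map(normals))
rhs = contact_features(normals) @ R90.T
print(np.allclose(lhs, rhs))  # True
```

Rotating only the pixels while leaving (n_x, n_y) untouched breaks the check, which is why the group action must include both parts. One intuition for the sample-efficiency gains above is that a model respecting this symmetry effectively sees every contact orientation from a single demonstration.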

Consolidated Comparison

| Study | Tactile Type | Improvement | Setting | Limitation |
|---|---|---|---|---|
| OSMO | 3-axis magnetic | +16%p | Real, 1 task | Small scale, single task |
| VTDexManip | Binary piezoresistive | ~+20% per modality | Sim | No real-world validation |
| ForceVLA | Vision-based estimation | +23.2%p | Sim/Lab | Indirect estimation |
| DexUMI | Integrated sensors | Essential (grasping fails without) | Real | 4 tasks |
| Ye et al. | Binary touch | 85% (seen objects) | Real | LEAP Hand only |
| UMI-FT | 6-axis F/T (CoinFT, 1 per finger) | +76%p (16%→92%) | Real | 2-finger gripper |
| EquiTac | GelSight, SO(2)-equivariant | 90% from 10 demos | Real | Single task |

3.3 Broad Coverage vs High Resolution

A central trade-off in tactile sensor design is sensor density (resolution) vs spatial coverage. Human fingertips contain approximately 2,500 mechanoreceptors/cm², but replicating this in a glove is currently infeasible.

Ye et al.'s [3] results offer a clear answer to this trade-off: binary touch (contact/no-contact) alone achieves 85% on seen objects (73% overall). This suggests that GelSight-level high-resolution tactile sensing (hundreds to thousands of taxels) is not strictly necessary, at least for the tasks tested.

Across the current results, a resolution spectrum emerges: fingertip multi-axis (EquiTac/TacCap) → whole-hand moderate resolution (OSMO/TacGlove) → finger-level aggregate force (UMI-FT). UMI-FT's CoinFT setup uses just one 6-axis F/T sensor per finger (2 total), the lowest spatial resolution on this spectrum, yet it raised whiteboard-wiping success from 16% to 92% and light-bulb insertion from 0% to 95%. Taken together with OSMO's +16%p from 12 three-axis sensors and Ye et al.'s 85% from binary sensors, these results suggest a design principle: "broad-coverage moderate-resolution sensors > narrow high-resolution sensors."

How important is the "fingertips + palm" distribution itself? Almeida et al. [12] quantified this on a deep-RL in-hand reorientation benchmark: the tactile contribution of upper-palm locations (27.87 consecutive successes) is essentially on par with that of the fingertip distal phalanges (28.21), and for small objects the configuration using all five palm locations scores highest of any configuration tested. Adding tactile channels to a vision-only baseline raised the success rate by 26% and made convergence 3× faster. These results challenge the long-standing intuition that "palms are auxiliary and fingertips are primary": on this benchmark, palm locations are at least as useful as fingertips. Sparsh-skin [13]'s ablation (removing palm pads drops pose estimation by 10+ percentage points) points in the same direction.

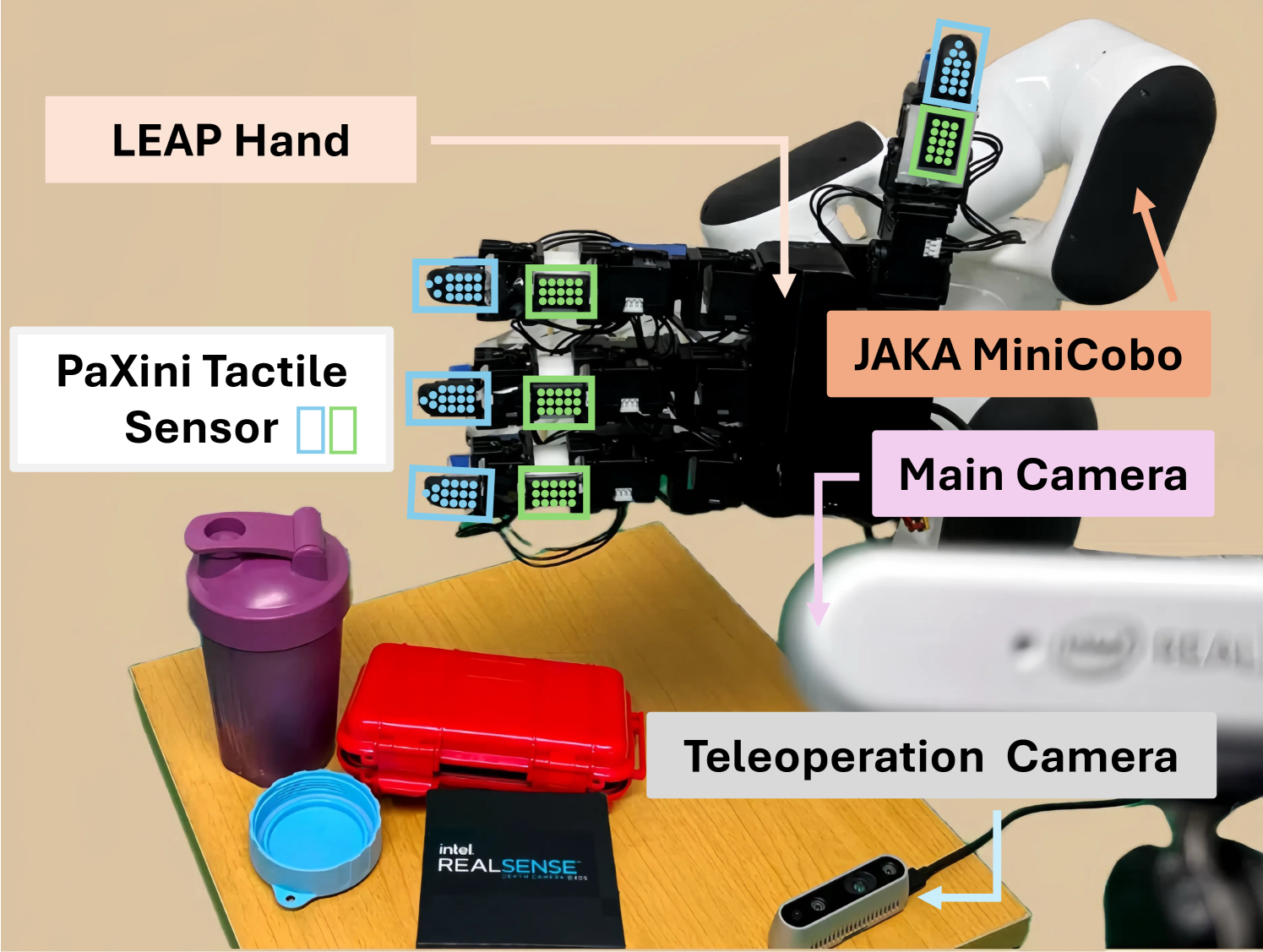

This principle directly justifies TacGlove [#26]'s design: 8 three-axis magnetic sensors distributed across fingers and palm for whole-hand coverage, with individual sensor resolution kept at a moderate level. Twenty-four channels (8 sensors × 3 axes) of continuous force data are richer than binary while more practical than high-density arrays. The 5 fingertips + 3 palm sections (thenar/hypothenar/central) layout directly aligns with Almeida et al.'s finding that palm locations dominate for small objects.
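A minimal sketch of how one TacGlove frame could be stored as an 8 × 3 array and flattened into the 24-channel vector a policy would consume. The sensor names and their order are hypothetical (the chapter specifies only 5 fingertips plus thenar, hypothenar, and central palm sections), as is the convention that axis 0 is normal force and axes 1 and 2 are shear.

```python
from dataclasses import dataclass

import numpy as np

# Hypothetical ordering: 5 fingertips, then the 3 palm sections named in the text.
SENSORS = (
    "thumb_tip", "index_tip", "middle_tip", "ring_tip", "little_tip",
    "palm_thenar", "palm_hypothenar", "palm_central",
)
AXES = ("normal", "shear_x", "shear_y")  # assumed axis convention
PALM = slice(5, 8)                       # rows of the three palm sensors


def channel_names() -> list[str]:
    """Names of the 24 flattened channels, sensor-major (matches as_vector)."""
    return [f"{sensor}.{axis}" for sensor in SENSORS for axis in AXES]


@dataclass
class TacGloveFrame:
    timestamp: float    # seconds
    forces: np.ndarray  # shape (8, 3): one row per sensor, one column per axis

    def __post_init__(self) -> None:
        expected = (len(SENSORS), len(AXES))
        if self.forces.shape != expected:
            raise ValueError(f"expected shape {expected}, got {self.forces.shape}")

    def as_vector(self) -> np.ndarray:
        """Flatten to the 24-channel policy input (row-major = sensor-major)."""
        return self.forces.reshape(-1)

    def palm_normal_load(self) -> float:
        """Total normal force across the three palm sections."""
        return float(self.forces[PALM, 0].sum())


frame = TacGloveFrame(timestamp=0.0, forces=np.zeros((8, 3)))
frame.forces[PALM, 0] = [1.0, 2.0, 3.0]  # e.g. an object resting in the palm
print(frame.as_vector().shape)           # (24,)
print(frame.palm_normal_load())          # 6.0
```

Keeping the named (8, 3) layout alongside the flat vector makes per-region queries such as palm load trivial, while the policy still receives a fixed 24-channel input.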

An important open question remains: do 3-axis continuous forces significantly outperform binary contact for industrial tasks such as capping and assembly? Capping requires precise torque and normal-force control, which may exceed what binary signals can convey. This is one of the essential ablations planned for TacTeleOp (Chapter 10).
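One way to run a 3-axis vs binary ablation without recording a second dataset would be to derive the binary condition from the same 3-axis recordings, so that only the tactile representation differs between the two arms. The sketch below assumes that approach (it is not a stated TacTeleOp protocol) and thresholds each sensor's force magnitude with hysteresis; the `on`/`off` thresholds are placeholders, not calibrated values.

```python
import numpy as np


def binarize_contacts(forces: np.ndarray, on: float = 0.5, off: float = 0.3) -> np.ndarray:
    """Turn (T, S, 3) force recordings into (T, S) boolean contact flags.

    A sensor enters contact when its force magnitude rises above `on` and
    leaves contact only when it falls to `off` or below (hysteresis), which
    prevents chattering when the force hovers near a single threshold.
    """
    if off > on:
        raise ValueError("`off` must not exceed `on`")
    magnitude = np.linalg.norm(forces, axis=-1)  # (T, S)
    contact = np.zeros(magnitude.shape, dtype=bool)
    state = np.zeros(magnitude.shape[1], dtype=bool)
    for t, mag in enumerate(magnitude):
        state = np.where(state, mag > off, mag > on)  # stay on vs. switch on
        contact[t] = state
    return contact


# One sensor, normal force only (N): rises, hovers, then releases.
recording = np.zeros((6, 1, 3))
recording[:, 0, 0] = [0.0, 0.4, 0.6, 0.4, 0.2, 0.4]
print(binarize_contacts(recording)[:, 0].astype(int).tolist())  # [0, 0, 1, 1, 0, 0]
```

Training one policy on the binarized flags and another on the raw continuous stream keeps demonstrations, vision, and proprioception identical across both arms of the comparison.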

3.4 Embodiment Bridge: The Gap That Tactile Resolves

OSMO's [1] Embodiment Bridge extends tactile value beyond performance improvement. When the same physical tactile glove is placed on both human and robot:

- Physical unification of tactile space: Contact patterns felt by human and robot originate from the same sensor, physically resolving the tactile cross-embodiment gap.

- Partial resolution of visual gap: The glove partially unifies the appearance of both sides.

- Simplified data alignment: Human and robot data are generated in the same sensor space, eliminating the need for complex domain adaptation.

However, OSMO's Embodiment Bridge is passive — it aligns data but does not enable the robot to actively learn the gap. TacPlay [#27] extends this to active learning: setting human tactile patterns as "targets" and having the robot explore, through autonomous play, how to reproduce them with its own kinematics (Chapter 9).

3.5 Sensor Selection Guide

Based on this survey's analysis, the criteria for tactile sensor selection are:

| Criterion | Recommendation | Rationale |
|---|---|---|
| Axes | 3-axis (normal + shear) | Slip detection is impossible without shear |
| Coverage | Whole-hand (fingertips + palm) | Ye et al.: coverage > resolution; Sparsh-skin ablation: removing palm pads drops pose estimation by 10+ pp |
| Human/robot shared | Required | OSMO Embodiment Bridge |
| Durability | Washable; swappable between shifts | 8-hour continuous factory operation |
| Cost | <$1,000 per glove | Competitive with AirExo (~$600) |
| EMI resistance | Shielding as needed | Factory electromagnetic interference |
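The "Axes" row in the table above rests on a simple physical argument: under Coulomb friction, a contact begins to slip when the tangential (shear) force reaches μ times the normal force. A normal-only or binary sensor cannot evaluate that ratio; a 3-axis sensor can. The sketch below flags contacts approaching the friction-cone boundary; the friction coefficient `mu`, the safety `margin`, and the [normal, shear_x, shear_y] axis order are assumed values for illustration.

```python
import numpy as np


def slip_risk(forces: np.ndarray, mu: float = 0.5, margin: float = 0.8,
              min_normal: float = 0.05) -> np.ndarray:
    """Flag sensors whose shear/normal ratio is close to the friction limit mu.

    forces: (S, 3) array of [normal, shear_x, shear_y] per sensor (assumed order, N).
    A sensor is flagged when |shear| / normal >= margin * mu; sensors without
    meaningful normal force are treated as out of contact and never flagged.
    """
    normal = forces[:, 0]
    shear = np.hypot(forces[:, 1], forces[:, 2])
    in_contact = normal > min_normal
    ratio = np.divide(shear, normal, out=np.zeros_like(shear), where=in_contact)
    return in_contact & (ratio >= margin * mu)


forces = np.array([
    [2.0, 0.2, 0.0],  # firm grip, little shear
    [2.0, 0.6, 0.6],  # same normal force, large shear: near slipping
    [0.0, 0.3, 0.0],  # no normal force: not in contact
])
print(slip_risk(forces).tolist())  # [False, True, False]
```

The first two sensors report the same 2.0 N normal force, so a normal-only or binary sensor could not tell them apart; only the shear axes reveal that the second contact is close to slipping.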

Magnetic sensors (OSMO-style) satisfy most of these criteria. OSMO's design is a useful reference: it combines MuMetal shielding with dual-magnetometer differential sensing, which reduces noise by 57%. Factory electromagnetic interference should still be measured on site beforehand, with additional shielding added where needed.
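The principle behind differential magnetometer sensing can be shown with synthetic numbers. In the sketch below, one magnetometer sees the embedded magnet plus ambient interference, a second reference magnetometer is assumed to see mostly the ambient field, and subtracting the two cancels what they share. All field values, the 95% coupling factor, and the placement are made-up assumptions; this illustrates common-mode rejection in general, not OSMO's signal chain, and it does not reproduce the 57% figure.

```python
import numpy as np


def rms_error(estimate: np.ndarray, truth: np.ndarray) -> float:
    """Root-mean-square error between an estimate and the true signal."""
    return float(np.sqrt(np.mean((estimate - truth) ** 2)))


rng = np.random.default_rng(0)
t = np.linspace(0.0, 1.0, 1000, endpoint=False)  # 1 s at 1 kHz (synthetic)

# Field change from the embedded magnet as the elastomer deforms (wanted signal).
magnet_field = 50.0 * np.sin(2 * np.pi * 2.0 * t)      # uT, synthetic
# Ambient interference (e.g. a nearby motor) that reaches both chips.
ambient = 30.0 * np.sin(2 * np.pi * 50.0 * t) + 10.0   # uT, synthetic

sensing = magnet_field + ambient + rng.normal(0.0, 1.0, t.size)
reference = 0.95 * ambient + rng.normal(0.0, 1.0, t.size)  # sees ~95% of ambient

single_ended = sensing              # no compensation
differential = sensing - reference  # subtract the common-mode field

print(f"single-ended RMS error: {rms_error(single_ended, magnet_field):.1f} uT")
print(f"differential RMS error: {rms_error(differential, magnet_field):.1f} uT")
```

The residual error in the differential signal comes from the imperfect ambient match and from uncorrelated sensor noise, which subtraction cannot remove; this is one reason on-site measurement and extra shielding still matter in a factory.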

3.6 Closed-Loop Control via Palm Tactile Signals

Most of the evidence so far frames tactile as an observation channel that boosts policy performance. Recent work pushes one step further, showing that palm tactile signals themselves can act as closed-loop control triggers that directly drive actuation.

Zhang et al.'s [14] TacPalm SoftHand embeds a vision-based tactile sensor, built around a 1280×800 micro-camera, into the palm of a soft robotic hand, achieving an effective density of 181,000 units/cm², roughly 750× the mechanoreceptive-unit density of human fingertip skin (~240 units/cm²). The Indentation Contour Area (ICA) computed from the palm image serves as a trigger: when the ICA exceeds a preset value, finger pneumatic inflation starts automatically, closing the grasp loop from the palm alone without external vision or a higher-level planner. The palm itself decides whether the object has settled in the hand and immediately contracts the fingers, making this one of the clearest hardware instantiations in which tactile sensing is promoted from observation to action trigger.
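The palm-triggered grasp loop reduces to a threshold rule on contact area. The sketch below approximates ICA as the area of pixels whose intensity differs from a no-contact reference frame by more than a threshold, then fires a finger-inflation callback when that area exceeds a preset value. The pixel area, both thresholds, and the `inflate_fingers` callback are hypothetical stand-ins, and TacPalm's actual contour extraction may differ.

```python
from typing import Callable

import numpy as np


def indentation_area(frame: np.ndarray, reference: np.ndarray,
                     diff_threshold: float, pixel_area_mm2: float) -> float:
    """Approximate contact area (mm^2) from pixels that changed vs. the reference."""
    diff = np.abs(frame.astype(np.float64) - reference.astype(np.float64))
    return float(np.count_nonzero(diff > diff_threshold)) * pixel_area_mm2


def palm_trigger_step(frame: np.ndarray, reference: np.ndarray,
                      inflate_fingers: Callable[[], None],
                      ica_threshold_mm2: float = 40.0,
                      diff_threshold: float = 25.0,
                      pixel_area_mm2: float = 0.0025) -> bool:
    """One control tick: inflate the fingers once the contact area is large enough."""
    area = indentation_area(frame, reference, diff_threshold, pixel_area_mm2)
    if area > ica_threshold_mm2:
        inflate_fingers()
        return True
    return False


calls = []
reference = np.zeros((800, 1280), dtype=np.uint8)  # 1280x800 image, no contact
light = reference.copy()
light[300:350, 500:550] = 100    # 50 x 50 px touch -> 6.25 mm^2
pressed = reference.copy()
pressed[300:500, 500:700] = 100  # 200 x 200 px indentation -> 100 mm^2
print(palm_trigger_step(light, reference, lambda: calls.append("inflate")))    # False
print(palm_trigger_step(pressed, reference, lambda: calls.append("inflate")))  # True
```

A light brush stays below the area threshold and leaves the fingers idle, while an object settling into the palm crosses it and triggers actuation, with no external camera or planner involved.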

System-level corroboration comes from Zhao et al.'s [15] F-TAC Hand. With 70% palmar coverage at 0.1 mm resolution, success in adapting multi-object delivery rises from 53.5% without tactile input to nearly 100% when the high-resolution palm tactile channel is active. A gap this large is more than a performance tweak: it shows that the control loop is genuinely closed through touch, with palm contact signals feeding directly into collision-avoidance and re-placement decisions.

The implication for TacTeleOp and TacPlay is substantial: once a tactile channel is present, the robot can behave as a reactive actor rather than a passive learner (Chapter 8, Chapter 9). TacGlove's 24-channel continuous force stream is rich enough to serve as input for such closed-loop policies, and the palm-side thenar/hypothenar/central layout recreates, at the hardware level, the "palm = decision point" principle demonstrated by TacPalm and F-TAC.

3.7 Key Discussion: Is Tactile Truly Essential?

Counter-arguments to tactile value exist:

- "AirExo-2 achieved teleop-level without tactile": This is partially valid, but AirExo-2 is limited to arm-level gripper tasks. As DexUMI's ablation shows, grasping fails without tactile on dexterous hand + contact-rich tasks. Task complexity determines tactile necessity.

- "Emergent alignment at pi0 scale may resolve the tactile gap": The emergent alignment observed in pi0 [8] [#2] has been confirmed only for the visual modality; tactile emergent alignment is unexplored. Moreover, pi0-scale training requires industrial-scale compute that is out of reach for most academic labs.

- "Binary touch at 85% makes 3-axis sensors redundant": Ye et al.'s 85% is for seen tasks in lab settings. Industrial processes requiring precise torque control (capping) or force magnitude discrimination (snap-fit assembly) may exceed binary information capacity. This is a hypothesis requiring validation through TacTeleOp's 3-axis vs binary ablation.

Conclusion: Tactile necessity is task-dependent. For simple pick-and-place, vision alone may suffice. For contact-rich industrial tasks, however, tactile is the key modality for lifting the ~70% vision-only ceiling toward the 95%+ that industry requires.

3.8 Connection to Our Direction

TacGlove's tactile addition is justified at three levels:

- Performance improvement: Consistent evidence from OSMO +16%p, VTDexManip +20%, DexUMI "essential"

- Embodiment Bridge: Physical resolution of human-robot gap via shared glove (Chapter 6, Chapter 9)

- Scaling law exploration: How tactile data scales remains an open question that no study has yet verified (Chapter 10)

Synthesizing Part I's three chapters: teleoperation has structural bottlenecks (Chapter 1), alternative collection hardware lacks tactile (Chapter 2), and tactile is essential for contact-rich tasks (Chapter 3). This is the starting point for TacGlove/TacTeleOp/TacPlay. Part II analyzes the methodologies for converting collected human data into robot policies (Chapter 4).

References

- [1] Yin, J., et al. (2025). OSMO: A Large-Scale Tactile Glove for Human-to-Robot Manipulation Transfer. arXiv. https://arxiv.org/abs/2512.08920 #18

- [2] Liu, Q., et al. (2025). VTDexManip: Visual-Tactile Dexterous Manipulation Dataset and Benchmark. ICLR 2025.

- [3] Ye, Q., et al. (2026). Visual-Tactile Learning for Dexterous Manipulation. Science Robotics.

- [4] Xu, M., et al. (2025). DexUMI: Universal Manipulation Interface for Dexterous Hands. arXiv. #8

- [5] Liu, V., et al. (2025). EgoZero: Robot Policy Learning from Egocentric Video without Robot Data. arXiv.

- [6] Yang, B., et al. (2026). AoE: Always-on Egocentric Data Collection for Robot Learning. arXiv.

- [7] Zheng, R., et al. (2026). EgoScale: Egocentric Video Pretraining for Scalable Robot Learning. arXiv.

- [8] Physical Intelligence (2025). pi0: A General-Purpose Robot Policy. arXiv. #2

- [9] Park, M., Park, Y.-L., et al. (2024). Stretchable Glove for Hand Motion Estimation. Nature Communications. #6

- [10] Kim, T., et al. (2026). UMI-FT: Compliant Manipulation via Universal Manipulation Interface with Force/Torque Sensing. arXiv. #36

- [11] Kim, M., et al. (2025). EquiTac: Tactile Equivariance for Efficient Manipulation Learning. arXiv. #37

- [12] Almeida, J. D., Falotico, E., Laschi, C., & Santos-Victor, J. (2025). The Role of Touch: Towards Optimal Tactile Sensing Distribution in Anthropomorphic Hands for Dexterous In-Hand Manipulation. IEEE ICNSC 2025. https://arxiv.org/abs/2509.14984 #41

- [13] Sharma, A., et al. (2025). Self-supervised perception for tactile skin covered dexterous hands (Sparsh-skin). arXiv. https://arxiv.org/abs/2505.11420

- [14] Zhang, N., Ren, J., Dong, Y., Gu, G., & Zhu, X. (2025). Soft Robotic Hand with Tactile Palm-Finger Coordination (TacPalm SoftHand). Nature Communications 16:2395. https://doi.org/10.1038/s41467-025-57741-6 #40

- [15] Zhao, Z., et al. (2025). Embedding high-resolution touch across robotic hands enables adaptive human-like grasping (F-TAC Hand). Nature Machine Intelligence. https://arxiv.org/abs/2412.14482 #39