Chapter 4: Can Human Data Alone Suffice? — Teleop-Free Approaches

Summary

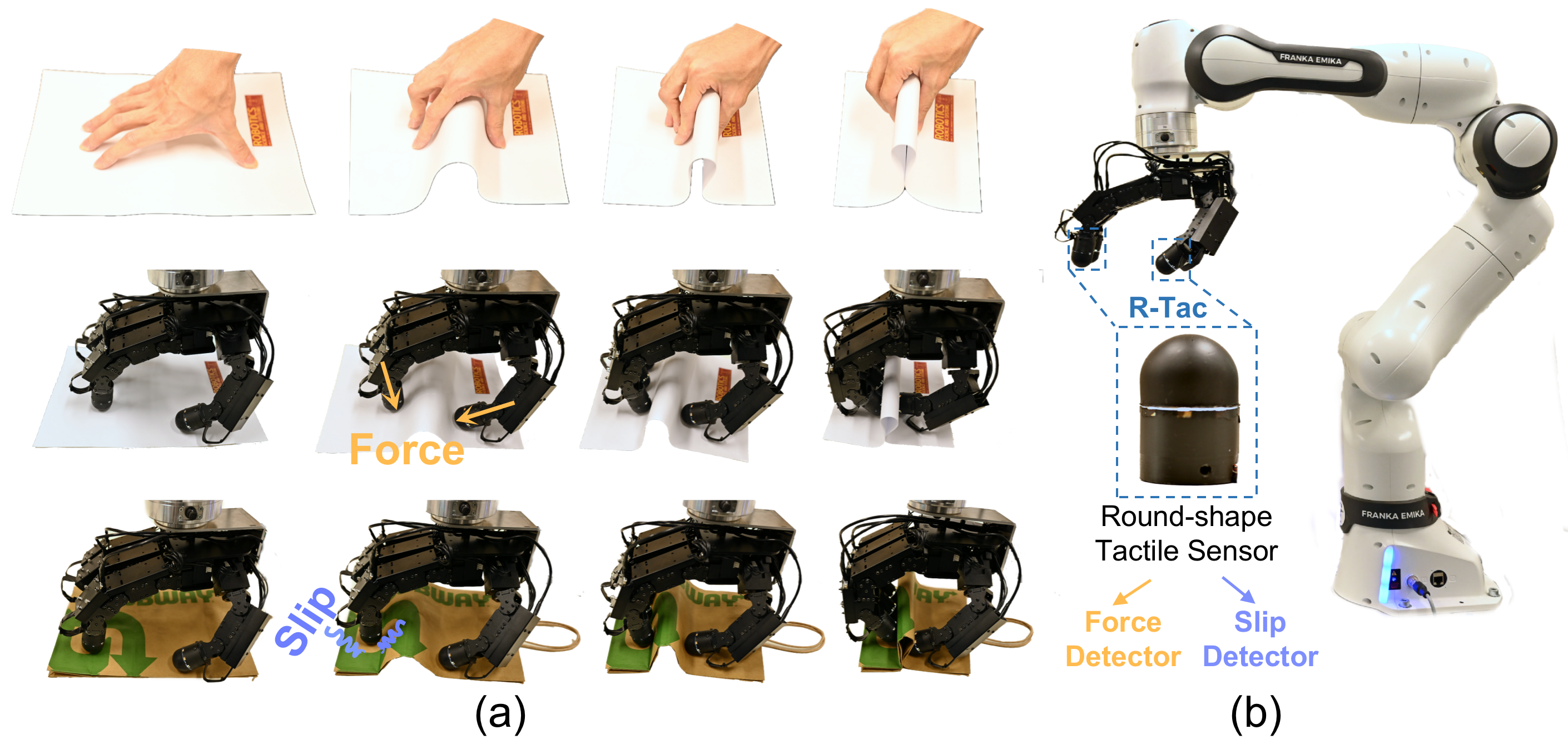

Starting in 2025, learning robot manipulation policies from human data alone (zero robot data) became possible. X-Sim achieves this with a single RGBD video, Human2Sim2Robot with a single demonstration, and EgoZero with smart glasses only. However, these approaches show systematic limitations on contact-rich tasks, and each has been validated on only 5 to 13 tasks. These limitations strengthen the case for Data A + Data B co-training (Chapter 5) and tactile information (Chapter 3).

4.1 Introduction

"Can Data B alone enable robot control?" corresponds to TacTeleOp [#26]'s Hypothesis H1. Until 2024, the answer was largely negative — the embodiment gap (kinematic, visual, dynamic differences between human and robot) was considered too large for direct transfer. In 2025, a series of studies overturned this assumption.

4.2 Real-to-Sim-to-Real: Routing Through Simulation

X-Sim (Cornell, CoRL 2025 Oral)

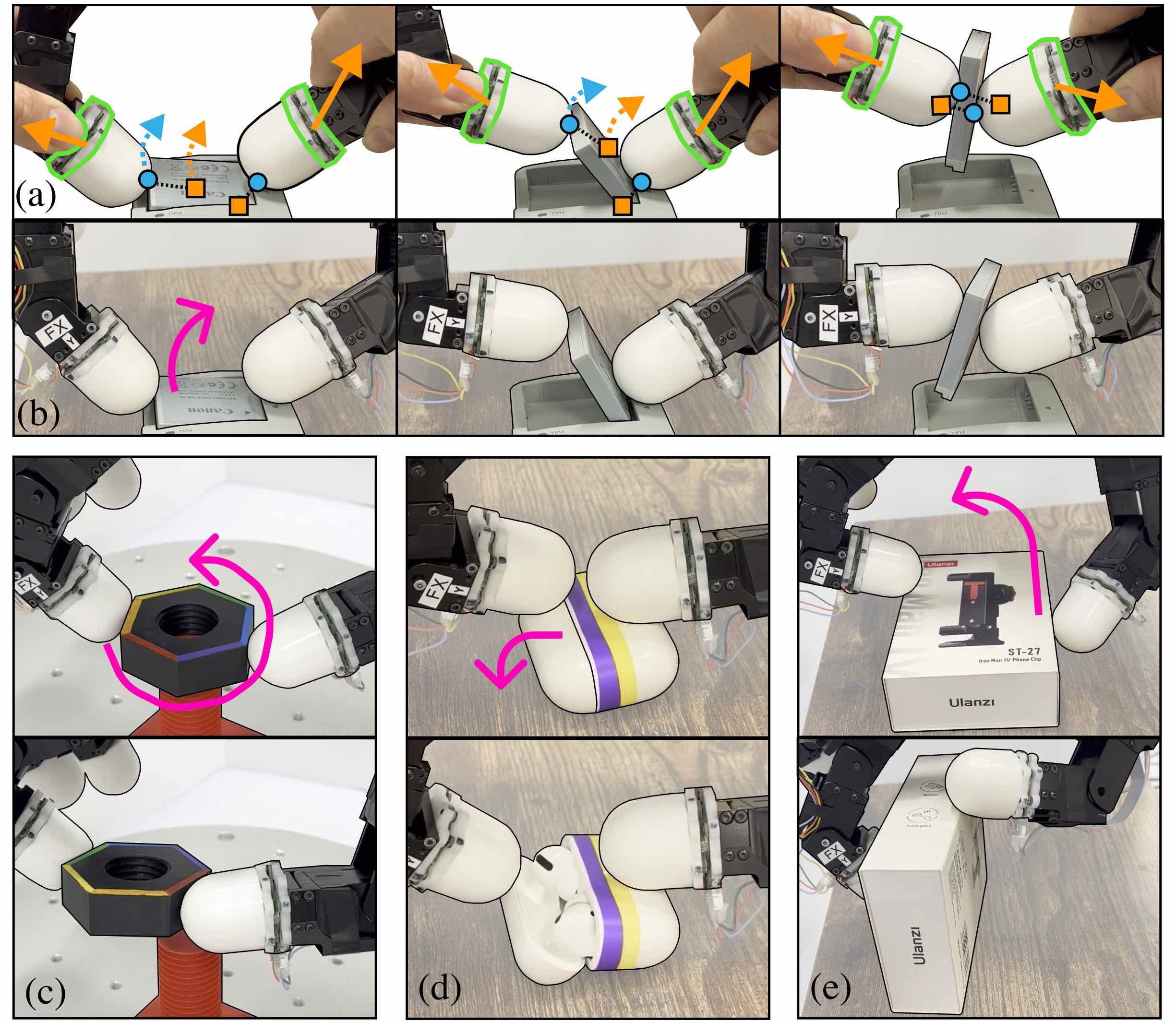

X-Sim [1] presents a 3-stage pipeline starting from a single human RGBD video: (1) photorealistic simulation reconstruction + object trajectory extraction, (2) RL training in simulation using object trajectory as an embodiment-agnostic reward, (3) synthetic rollout generation across diverse viewpoints/lighting followed by distillation into a diffusion policy.

| Metric | Value |
|---|---|
| Task progress improvement | +30% (vs baselines) |
| Data collection time | 10× reduction vs BC |
| Input | 1 human RGBD video |
| Robot data | 0 |

X-Sim's key insight is using object trajectory as reward. How the human moved the object (object-centric representation) is embodiment-agnostic, functioning as a reward signal regardless of human-robot kinematic differences.
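The idea of an object-trajectory reward can be illustrated with a minimal sketch. This is not X-Sim's actual reward: the function name `trajectory_tracking_reward`, the exponential shaping, and the `scale` parameter are all assumptions chosen for illustration. The only point it makes is that the reward depends on where the object is, not on what moved it.

```python
import math

def trajectory_tracking_reward(sim_pos, ref_traj, t, scale=10.0):
    """Reward in (0, 1]; equals 1.0 when the simulated object is on the reference.

    sim_pos  : (x, y, z) of the object in simulation at step t, in metres
    ref_traj : list of (x, y, z) object positions extracted from the human video
    scale    : hypothetical shaping constant (larger = sharper penalty)
    """
    # Hold the final reference position once the demonstration has ended.
    ref_pos = ref_traj[min(t, len(ref_traj) - 1)]
    dist = math.dist(sim_pos, ref_pos)  # Euclidean distance
    return math.exp(-scale * dist)

# Toy reference trajectory (made-up numbers, not from the paper).
ref_traj = [(0.0, 0.0, 0.0), (0.1, 0.0, 0.05), (0.2, 0.0, 0.0)]

on_track = trajectory_tracking_reward((0.1, 0.0, 0.05), ref_traj, t=1)
off_track = trajectory_tracking_reward((0.1, 0.1, 0.05), ref_traj, t=1)
```

Nothing in the function refers to a hand or a gripper, which is why the same signal can in principle supervise any embodiment that is able to move the object.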

Limitations: (1) RGBD input is required (less accessible than RGB-only). (2) Only 5 tasks were validated, none of them contact-rich (screwing, etc.). (3) The object-centric reward may be weak for tool use or deformable objects. (4) The method is gripper-based; dexterous hands are unvalidated.

Human2Sim2Robot (Stanford, CoRL 2025)

Human2Sim2Robot [2] learned dexterous hand (Allegro) policies from a single human RGB-D video. It uses object 6D pose trajectory as dense reward and initializes RL exploration with pre-manipulation hand pose.

| vs Baseline | Improvement |
|---|---|
| vs Replay | +67% |
| vs Object-Aware Replay | +55% |
| vs BC (data augmentation) | +68% |

Evaluated on 7 real-world tasks (KUKA + Allegro Hand) with 10 rollouts each. Ablation confirmed object pose trajectory is more stable than hand tracking reward — hand tracking propagates pose estimation errors.
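A dense reward on a 6D pose combines a position error with an orientation error. The sketch below is one common way to do that, not the reward used by Human2Sim2Robot: the weights `w_pos` and `w_rot`, the exponential form, and the `(w, x, y, z)` unit-quaternion convention are assumptions.

```python
import math

def rotation_angle(q_a, q_b):
    """Angle in radians between two unit quaternions given as (w, x, y, z)."""
    # abs() makes q and -q (the same rotation) compare as identical.
    dot = abs(sum(a * b for a, b in zip(q_a, q_b)))
    return 2.0 * math.acos(min(1.0, dot))  # clamp guards against rounding

def pose_tracking_reward(pos, quat, ref_pos, ref_quat, w_pos=10.0, w_rot=1.0):
    """Reward in (0, 1] that decays with position and orientation error."""
    pos_err = math.dist(pos, ref_pos)        # metres
    rot_err = rotation_angle(quat, ref_quat)  # radians
    return math.exp(-(w_pos * pos_err + w_rot * rot_err))

identity = (1.0, 0.0, 0.0, 0.0)
quarter_turn_z = (math.cos(math.pi / 4), 0.0, 0.0, math.sin(math.pi / 4))

perfect = pose_tracking_reward((0, 0, 0), identity, (0, 0, 0), identity)
rotated = pose_tracking_reward((0, 0, 0), quarter_turn_z, (0, 0, 0), identity)
```

Because the reward is computed from the object's pose only, errors in hand tracking never enter it, which is consistent with the ablation result reported above.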

Author-stated limitations: (1) KUKA + Allegro only. (2) Rigid objects only. (3) Pose estimation ambiguity for symmetric/reflective objects. (4) Single-object, single-task policies. (5) Digital twin reconstruction required per environment.

TacPlay [#27] connection: The limitation the authors acknowledge, "pose estimation noise," is precisely where tactile sensing can help. Direct tactile measurement at the contact surface bypasses the occlusion and noise that affect vision-based pose estimation.

4.3 Zero Robot Data: Direct Transfer Without Simulation

EgoZero (NYU/Berkeley, 2025)

EgoZero [3] collected egocentric human demonstrations from Project Aria smart glasses and learned manipulation policies with zero robot data. The key is an egocentric 3D point-based unified state-action space that abstracts kinematic differences between human and robot into a morphology-agnostic representation.

| Metric | Value |
|---|---|
| Success rate (7-task avg) | 70% |
| Collection time per task | 20 min |
| Robot data | 0 |

EgoZero's 70% average success rate shows that human data alone can reach a substantial level of performance, but it also means a 30% failure rate. The 25-percentage-point gap to industrial requirements (95%+) defines the space that tactile sensing and co-training must fill.

Limitations: Franka Panda gripper only, no dexterous hand, Project Aria (Meta proprietary) dependency, complete absence of tactile/force information.

VidBot (TU Munich/ETH, CVPR 2025)

VidBot [4] learns 3D affordances from in-the-wild monocular RGB human videos. It reconstructs metric-scale 3D hand trajectories via depth foundation model + structure-from-motion and generates fine-grained interaction trajectories with a diffusion model. It reported approximately +20% improvement over existing methods across 13 tasks.
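Lifting a 2D hand keypoint to a metric 3D point is, at its core, pinhole back-projection. The sketch below shows only that step, assuming a metric depth value is already available for the pixel; how VidBot actually obtains and scales depth (depth foundation model plus structure-from-motion) is not reproduced here, and the intrinsics are made-up values.

```python
def backproject(u, v, depth_m, fx, fy, cx, cy):
    """Map pixel (u, v) with metric depth to a 3D point in the camera frame.

    fx, fy : focal lengths in pixels
    cx, cy : principal point in pixels
    Returns (x, y, z) in metres, with z pointing along the optical axis.
    """
    x = (u - cx) * depth_m / fx
    y = (v - cy) * depth_m / fy
    return (x, y, depth_m)

# Hypothetical intrinsics for a 640x480 image.
fx, fy, cx, cy = 500.0, 500.0, 320.0, 240.0

# A fingertip detected 100 px right of the image centre, 1 m from the camera.
fingertip_3d = backproject(420.0, 240.0, 1.0, fx, fy, cx, cy)
```

Applying this to a hand keypoint in every frame yields a 3D trajectory, but one that contains geometry only; no force or contact information can be recovered this way.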

The ability to achieve zero-shot transfer from RGB alone is notable, but the complete absence of force information suggests a ceiling on contact-rich tasks.

4.4 Pretraining-Based Approaches

LAPA (ICLR 2025)

LAPA [5] learns discrete latent actions via VQ-VAE between frames, uses these for VLA pretraining, then fine-tunes with small amounts of robot data.
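The quantization step at the heart of a VQ-VAE can be sketched as a nearest-neighbour lookup in a codebook. The example below is a toy: the codebook is fixed rather than learned, the two-dimensional vector `z` stands in for an encoder output computed from a pair of frames, and none of the names come from the LAPA codebase.

```python
def quantize(z, codebook):
    """Return (index, code) of the codebook entry nearest to z in squared L2."""
    def sq_dist(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))

    index = min(range(len(codebook)), key=lambda k: sq_dist(z, codebook[k]))
    return index, codebook[index]

# Toy codebook with four discrete "latent actions" (hypothetical values).
codebook = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]

# Stand-in for the encoder output for (frame_t, frame_t+1).
z = (0.9, 0.2)

token, code = quantize(z, codebook)
```

The integer `token` is what makes the latent action discrete: a sequence of such tokens can serve as a prediction target during pretraining without any robot action labels.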

| Experiment | LAPA | OpenVLA | Difference |
|---|---|---|---|
| Real-world avg | 50.1% | 43.9% | +6.2%p |
| Unseen objects | 57.8% | 46.2% | +11.6%p |
| Compute cost | 272 H100-hr | 21,500 A100-hr | ~30× efficiency |

LAPA's conclusion that "human video pretraining is more efficient than robot data" directly supports TacTeleOp's B pretrain → A fine-tune structure. However, weak cross-environment generalization (Language Table 33.6% vs ActionVLA 64.8%) and limitations on fine-grained motions like grasping were reported.
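The differences in the LAPA table can be checked with a few lines of arithmetic. The sketch also computes the raw ratio of GPU-hours, which is not the same quantity as the ~30× efficiency figure: the two runs used different GPU types (H100 vs A100), so hours are not directly comparable, and no hardware normalization is attempted here.

```python
# Success rates in percent, taken from the table.
lapa_real, openvla_real = 50.1, 43.9
lapa_unseen, openvla_unseen = 57.8, 46.2

# Percentage-point gains (rounded to one decimal to absorb float error).
real_gain_pp = round(lapa_real - openvla_real, 1)
unseen_gain_pp = round(lapa_unseen - openvla_unseen, 1)

# Raw GPU-hour ratio, ignoring the H100 / A100 hardware difference.
lapa_gpu_hours = 272        # H100-hours
openvla_gpu_hours = 21_500  # A100-hours
raw_gpu_hour_ratio = openvla_gpu_hours / lapa_gpu_hours

print(real_gain_pp, unseen_gain_pp, round(raw_gpu_hour_ratio, 1))
```

The raw ratio comes out near 79, so the ~30× figure is best read as the efficiency claim as reported rather than as a direct quotient of the two compute columns.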

VideoDex (CMU, CoRL 2023 / IJRR 2024)

VideoDex [6] extracted hand motions from internet videos, retargeted them to LEAP Hand, and learned policies via pretrained visual embeddings + Neural Dynamical Policies. It pioneered the "human video → robot dexterous policy" paradigm, but it requires 120–175 demos per task for fine-tuning.

4.5 Comparative Analysis: State of Teleop-Free Approaches

| Paper | Method | Input | Robot Data | Key Result | Contact-rich |
|---|---|---|---|---|---|
| X-Sim | Sim RL + object reward | 1 RGBD | 0 | +30% | Unvalidated |
| Human2Sim2Robot | Sim RL + pose reward | 1 RGB-D | 0 | +55–68% | Partial |
| EgoZero | 3D point policy | Aria glasses | 0 | 70% | Unvalidated |
| VidBot | 3D affordance | Monocular RGB | 0 | +20% | Unvalidated |
| LAPA | Latent action pretrain | Internet video | Minimal | 30× efficiency | Weak |
| VideoDex | Retarget + pretrain | Internet video | 120–175/task | Pretrain effect | Unvalidated |
| UMI | Diffusion Policy | Handheld gripper | 0 | Cup 100%, tossing 87.5%, outdoor 71.7% | No |
| ACT-1 | Skill Transform + model | Skill Capture Glove | 0 | 33 manipulation types, Airbnb zero-shot | No |

Pattern Analysis

- 2025 as the inflection point: Nearly all zero-robot-data studies surveyed here date from 2025; UMI (RSS 2024) is the one earlier example.

- Vision-centric rewards dominate: Object trajectory (X-Sim), object pose (Human2Sim2Robot), and video similarity (Human2Bot [7]) are all vision-based.

- Complete absence of tactile: Including UMI and ACT-1, none of these approaches use tactile information.

- Gripper bias: EgoZero, X-Sim, VidBot, and UMI are gripper-based. Only Human2Sim2Robot and VideoDex use dexterous hands. ACT-1 uses a dexterous hand but depends on Skill Transform.

- Contact-rich unvalidated: Screw driving, capping, and precision assembly are largely absent.

4.6 Key Discussion: The Contact-Rich Wall

Teleop-free achievements are impressive, but a systematic gap exists for contact-rich tasks. The causes are clear:

- Visual reward limitations: Object trajectories and poses do not capture force distributions at contact surfaces. In capping, even if the object is correctly positioned, insufficient torque causes failure — visual rewards cannot distinguish this failure mode.

- Amplified sim-to-real gap: Contact dynamics is the least accurate domain in simulation. Friction, deformation, and surface condition errors are maximized in contact-rich tasks.

- Pose estimation limitations: As Human2Sim2Robot's authors acknowledged, pose estimation is ambiguous for symmetric/reflective objects. Combined with occlusion at contact surfaces, these errors are particularly severe for contact-rich tasks.
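The first cause in the list above, that a pose-only reward cannot see torque, can be made concrete with a toy capping example. Everything here is hypothetical: the reward form, the torque threshold `min_torque_nm`, and the two rollouts are invented purely to illustrate the failure mode and do not come from any of the cited papers.

```python
import math

def visual_reward(cap_angle_rad, target_angle_rad):
    """Pose-only reward: observes the cap's rotation, not the applied torque."""
    return math.exp(-abs(cap_angle_rad - target_angle_rad))

def cap_is_sealed(torque_nm, min_torque_nm=1.0):
    """Hypothetical ground-truth success test based on tightening torque."""
    return torque_nm >= min_torque_nm

target = math.pi  # cap should end up rotated by half a turn

# Two made-up rollouts that end in the same pose with different torque.
loose = {"angle": math.pi, "torque": 0.3}
tight = {"angle": math.pi, "torque": 1.5}

same_reward = (
    visual_reward(loose["angle"], target) == visual_reward(tight["angle"], target)
)
outcomes = (cap_is_sealed(loose["torque"]), cap_is_sealed(tight["torque"]))
```

Both rollouts receive the same visual reward even though only one of them seals the cap, so an RL agent optimizing this reward has no gradient toward applying sufficient torque.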

UMI [8] [#35] achieved high success rates on non-contact-rich tasks such as cup arrangement (100%), but it lacks tactile sensors, and its extension to contact-rich tasks remains unvalidated. ACT-1 [9] [#29]'s espresso extraction demo suggests contact-rich potential, but without systematic benchmarks it cannot yet be evaluated quantitatively. The 10% of failure cases behind Skill Transform's 90% success rate also remain undisclosed.

From a deployment perspective, Habilis-β [10] [#33] proposed deployment metrics — TPH (tasks per hour) and MTBI (mean time between interventions) — evaluating sustained operational efficiency rather than single-episode success rates. It reported 4.75× productivity improvement over π0.5 in simulation. This reminds us that sustainable operational viability, not just contact-rich success rates, is a critical evaluation axis for real deployment.
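Deployment metrics of this kind are simple to compute from an operation log. The sketch below uses the plain-language definitions given above (tasks completed per hour, and mean operating time between human interventions); the exact definitions used by Habilis-β may differ, and the log values are invented.

```python
def tasks_per_hour(completed_tasks, elapsed_seconds):
    """TPH: completed tasks divided by elapsed wall-clock hours."""
    return completed_tasks / (elapsed_seconds / 3600.0)

def mean_time_between_interventions(elapsed_seconds, interventions):
    """MTBI in seconds; infinite if no intervention was needed."""
    if interventions == 0:
        return float("inf")
    return elapsed_seconds / interventions

# Hypothetical two-hour shift: 90 tasks completed, 4 human interventions.
elapsed = 2 * 3600
tph = tasks_per_hour(90, elapsed)
mtbi = mean_time_between_interventions(elapsed, 4)
```

Unlike a per-episode success rate, both numbers penalize slow execution and frequent resets, which is why they are better suited to judging sustained operation.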

This analysis points to two paths forward: adding tactile information (Chapter 3) and co-training Data B with Data A (Chapter 5).

4.7 Connection to Our Direction

Teleop-free studies provide three implications for TacGlove/TacTeleOp/TacPlay:

- Data B's baseline value confirmed: X-Sim, EgoZero, and VidBot showed meaningful policies can be learned from human data alone. The premise that TacTeleOp's Data B collection has potential value is confirmed.

- A ceiling near 70%: EgoZero's 70% suggests a practical upper bound for vision-only Data B, although this rests on a single study. Breaking this ceiling requires tactile information (Chapter 3) and co-training (Chapter 5).

- Tactile reward opportunity: All approaches including UMI and ACT-1 rely on visual rewards; tactile rewards have not even been attempted. TacPlay's "tactile-target RL" occupies this empty space.

The next chapter (Chapter 5) analyzes co-training approaches that combine Data B with Data A to overcome the limitations of Data B alone.

References

[1] Dan, P., et al. (2025). X-Sim: Cross-Embodiment Simulation for Robot Learning. CoRL 2025 Oral. https://portal-cornell.github.io/X-Sim/

[2] Lum, T. G. W., et al. (2025). Human2Sim2Robot: Dexterous Manipulation Transfer via Simulation. CoRL 2025.

[3] Liu, V., et al. (2025). EgoZero: Robot Policy Learning from Egocentric Video without Robot Data. arXiv.

[4] Chen, H., et al. (2025). VidBot: Learning Robot Manipulation from Internet Videos. CVPR 2025.

[5] Ye, S., et al. (2025). LAPA: Latent Action Pretraining from Videos. ICLR 2025.

[6] Shaw, K., et al. (2023/2024). VideoDex: Learning Dexterous Manipulation from Internet Videos. CoRL 2023 / IJRR 2024.

[7] Ghunaim, Y., et al. (2025). Human2Bot: Zero-Shot Robot Learning from Human Videos. Autonomous Robots.

[8] Chi, C., et al. (2024). Universal Manipulation Interface: In-The-Wild Robot Teaching Without In-The-Wild Robots. RSS 2024. #35

[9] Sunday, E. (2025). ACT-1: Humanoid Hand for Human-Level Manipulation. Physical Intelligence Blog. #29

[10] Habilis Team (2026). Habilis-β: On-Device VLA for Sustained Autonomous Operation. arXiv. #33