Chapter 6: Bridging the Embodiment Gap — Retargeting and Alignment

Summary

The human-robot embodiment gap decomposes into three dimensions: kinematic, visual, and tactile. Visual-gap solutions are the most mature (Mirage, H2R, Masquerade); the kinematic gap shows promising results via residual RL (DexH2R, roughly +40 percentage points over retargeting alone); and the tactile gap remains nearly unexplored, with OSMO's shared platform as the only direct attempt. "Tactile residual learning" has never been tried, which makes it the core novelty space for TacPlay [#27].

6.1 Introduction

The most fundamental barrier in converting human hand data (Data B) into robot policies is the embodiment gap: the physical differences between human and robot. The human hand has 5 fingers and 20+ DoF in total, while robot hands typically have 3–5 fingers with 3–4 DoF each. Appearance, sensor distribution, and dynamics differ as well.

Decomposing this gap into three dimensions shows how mature each one is and how much of it remains unresolved.

6.2 Kinematic Gap: Differences in Joint Structure and Range

DexH2R: Residual RL (2024)

DexH2R [1] addresses the kinematic gap through task-oriented residual RL. Retargeted human hand motions serve as primitive actions, and a residual policy trained with RL on top of them corrects the retargeting errors.

| Metric | Value |
|---|---|
| Grasping success rate | 70.9% |
| Whole trajectory completion | 52.7% |
| Gain over retargeting-only | about +40 pp |

Key result: retargeting alone plateaus at ~30%, but adding residual RL raises it to 70.9%. The gap of roughly 40 percentage points is what the residual policy corrects.

TacPlay connection: TacPlay's residual policy structure $\pi_{robot} = \pi_{human} + \Delta_{residual}$ directly inherits DexH2R's approach. The difference is that DexH2R uses a visual, task-oriented reward, while TacPlay uses a tactile-based reward.
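The base-plus-residual structure can be illustrated with a minimal sketch. Everything here is an assumption made for illustration: the function name `compose_action`, the residual scale, the normalized joint limits, and the 4-joint example are hypothetical and are not taken from DexH2R or TacPlay.

```python
import numpy as np

def compose_action(base_action, residual, residual_scale=0.1, low=-1.0, high=1.0):
    """Add a scaled residual correction to a retargeted base action.

    base_action: action obtained by retargeting the human motion.
    residual: raw output of a learned residual policy (assumed to lie in [-1, 1]).
    residual_scale: hypothetical bound that keeps corrections small.
    """
    base_action = np.asarray(base_action, dtype=float)
    residual = np.asarray(residual, dtype=float)
    # The final command is clipped to the (assumed) normalized joint limits.
    return np.clip(base_action + residual_scale * residual, low, high)

# Hypothetical 4-joint finger: retargeted targets plus a learned correction.
base = np.array([0.2, 0.5, -0.3, 0.95])
delta = np.array([1.0, -1.0, 0.0, 1.0])
action = compose_action(base, delta)
```

Scaling the residual keeps the robot close to the human-derived motion, so the learned term only has to account for retargeting error rather than the whole behavior.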

Limitations: simulation only, task-specific reward requires manual design, cross-task generalization unvalidated.

ManipTrans: Bimanual Residual Learning (CVPR 2025)

ManipTrans [2] achieved bimanual dexterous manipulation transfer by pretraining a generalist trajectory imitator and then fine-tuning a specialist residual module. Using DexManipNet (3.3K episodes, 1.34M frames), it reported state-of-the-art success rate, fidelity, and efficiency.

Together with DexH2R, it supports the validity of residual learning, but remains simulation-only and uses no tactile information.

Park et al.: Learning to Transfer Human Hand Skills (CMU/SNU, 2025)

Park et al. [3] learned a joint motion manifold that maps human hand movements, robot hand actions, and object movements in 3D for retargeting. By generating pseudo-supervision triplets from human mocap and robot teleoperation data, their learning-based retargeting method achieved an overall success rate of 0.59, compared with 0.39 for a fingertip-matching baseline. This is a CMU/SNU (Hanbyul Joo lab) collaboration.

ACT-1's Embodiment Alignment: Hardware Co-Design (Sunday Robotics, 2025)

ACT-1 [9] [#29] presents the most extreme strategy for the kinematic gap: co-designing the robot hand and the human glove with exactly the same geometry and sensor layout, so that kinematic retargeting is not needed at all. Skill Transform then aligns the residual kinematic and visual differences, achieving a 90% transfer success rate. This makes the approach qualitatively different from software-based methods such as DexH2R or ManipTrans, since the gap is closed in hardware rather than corrected in software.

However, the Skill Capture Glove is gripper-form (2-DoF) and does not include tactile sensors, leaving its effectiveness unvalidated for tasks requiring dexterous hands (20+ DoF) and distributed tactile sensing. Additionally, co-design cannot be applied to existing robot hardware — each new robot hand requires a redesigned glove.

6.3 Visual Gap: Appearance Differences Between Human and Robot Hands

Visual gap solutions are the most mature of the three dimensions.

Mirage: Cross-Painting (UC Berkeley, RSS 2024)

Mirage [4] resolves the visual gap at test time by inpainting the target robot so that it appears as the source robot, achieving zero-shot transfer across 3 robots × 4 tasks with minimal performance degradation. Key ablation: removing cross-painting causes a sharp performance drop, which indicates that the visual gap is a primary bottleneck.

Limitations: gripper-only, dexterous hand unvalidated, real-time processing cost.

H2R: Human→Robot Video Augmentation (2025)

H2R [5] extracts 3D hand keypoints from human videos, synthesizes robot motions in simulation, and composites them into egocentric videos. From Ego4D/SSv2 it generated 1M-scale datasets, yielding gains of +5.0–10.2% in simulation and +6.7–23.3% in the real world across UR5+Gripper, UR5+LEAP Hand, and Franka.

Masquerade: Robotized Demonstrations (2025)

Masquerade [6] transforms in-the-wild human videos into "robotized" demonstrations. It inpaints out human arms, overlays bimanual robots, pretrains visual encoders on 675K edited frames, then fine-tunes diffusion policies with 50 robot demos/task.

| Comparison | Result |
|---|---|
| vs baselines | Outperforms by 5–6× |
| Scaling | Logarithmic (with edited data volume) |

The ablation confirmed that both robot overlay and co-training are indispensable. The logarithmic scaling with edited data volume is a useful reference for predicting how data volume will affect TacTeleOp [#26].

UMI: Physical Equivalence (Chi et al., RSS 2024)

UMI [10] [#35] resolves the visual gap through hardware rather than software. The human demonstrates using the same handheld gripper ($371) as the robot, so the robot end-effector appears directly in the demonstration footage, eliminating appearance differences at their source. A 155-degree GoPro fisheye camera and relative trajectory action representation enable cross-robot transfer.
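A relative trajectory representation can be sketched as follows: future end-effector poses are expressed in the frame of the current pose, so the same action sequence can be replayed from a different starting pose or on a different robot base. This is a minimal illustration under stated assumptions, not UMI's implementation; the helper names (`make_pose`, `to_relative`, `to_absolute`) and the yaw-only rotations are hypothetical simplifications.

```python
import numpy as np

def make_pose(yaw, xyz):
    """4x4 homogeneous transform: rotation by `yaw` (rad) about z, then translation."""
    c, s = np.cos(yaw), np.sin(yaw)
    T = np.eye(4)
    T[:3, :3] = np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])
    T[:3, 3] = xyz
    return T

def to_relative(current_pose, future_poses):
    """Express each future pose in the frame of the current pose."""
    inv_current = np.linalg.inv(current_pose)
    return [inv_current @ T for T in future_poses]

def to_absolute(current_pose, relative_poses):
    """Recover world-frame poses from relative ones, given a new current pose."""
    return [current_pose @ T for T in relative_poses]

# Hypothetical demonstration recorded in the human's world frame.
demo = [
    make_pose(0.0, [0.00, 0.00, 0.00]),
    make_pose(0.1, [0.05, 0.00, 0.02]),
    make_pose(0.2, [0.10, 0.01, 0.04]),
]
relative = to_relative(demo[0], demo[1:])

# Replay from an arbitrary robot start pose (also hypothetical).
robot_start = make_pose(1.0, [0.4, -0.2, 0.3])
replayed = to_absolute(robot_start, relative)
```

Because the relative poses are unchanged when the whole demonstration is moved by a rigid transform, the representation does not depend on where the world frame was placed during data collection.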

If Mirage/H2R/Masquerade represent "human → robot appearance transformation," UMI is the physical solution of "same appearance from the start." It reported 90% success rate on zero-shot transfer to Franka FR2.

Limitations: As a gripper-only interface, it cannot be applied to dexterous hands, and since the human demonstrates by holding a gripper, finger-level manipulation data cannot be collected. Similar to ACT-1's hardware equivalence strategy, but UMI focuses on the visual dimension while ACT-1 targets the kinematic dimension.

6.4 Tactile Gap: Differences in Sensory Density and Distribution

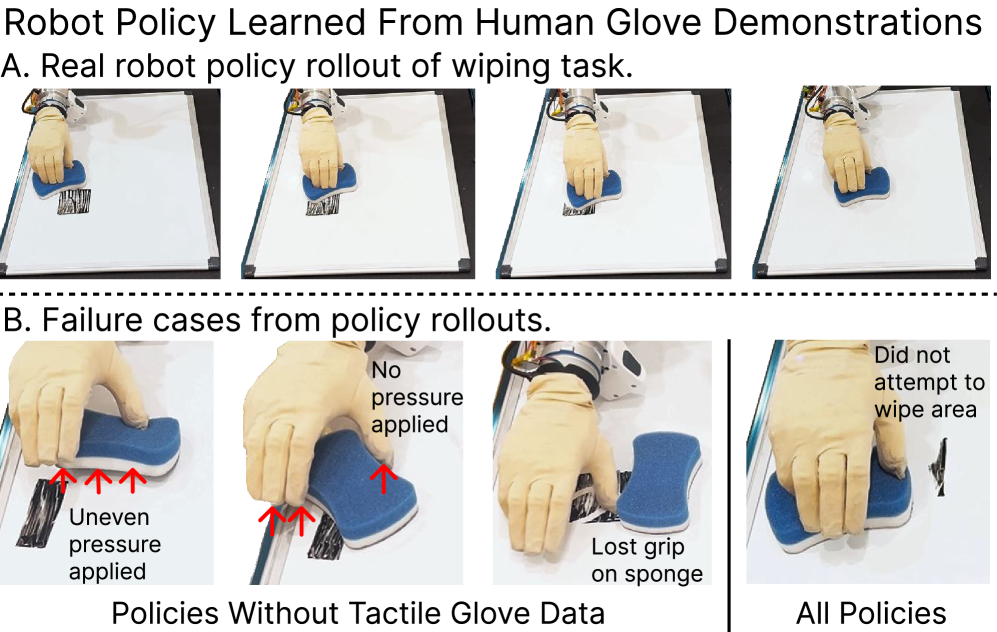

The tactile gap is the least resolved of the three dimensions, making it TacPlay's core opportunity.

Status: OSMO Is the Only Attempt

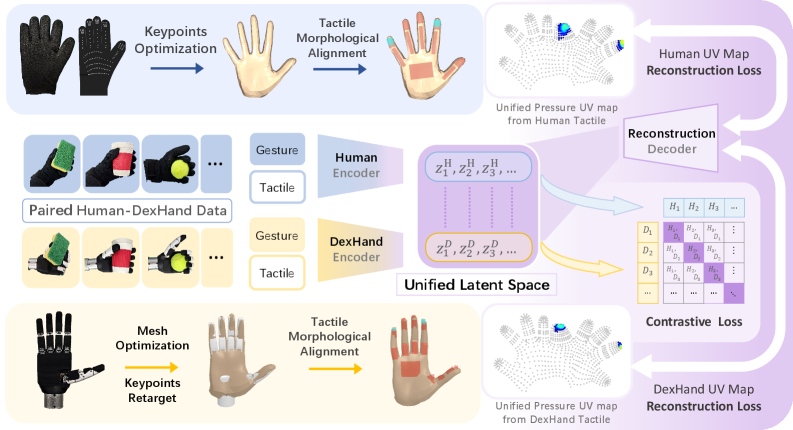

The human hand contains approximately 17,000 mechanoreceptors, while current tactile gloves carry 12–548 sensors. This density mismatch can be partially addressed through UV map normalization (UniTacHand [#16]) or shared platforms (OSMO [#18]).

A more fundamental problem is that the same manipulation action produces different contact patterns due to kinematic differences. A human grasping a cup with 5 fingers generates a fundamentally different tactile pattern than a 4-fingered robot (LEAP Hand) grasping the same cup — contact area, force distribution, and per-finger roles all differ.

OSMO [7] physically bypasses this by placing the same glove on both human and robot. Since data originates from the same sensor, sensor specificity is eliminated. However, this is passive alignment — it places data in the same space but does not correct pattern differences arising from kinematic differences.

EquiTac: Tactile Equivariant Representations (2025)

EquiTac [11] [#37] presents a structural approach to the tactile gap. It exploits the fact that GelSight sensor surface normal maps exhibit SO(2)-equivariant symmetry under object rotation, processing tactile data through a C₈-equivariant CNN. This enables zero-shot generalization to unseen orientations (angle estimation error of 2.9 degrees) and a 90% success rate with only 10 demonstrations.
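The equivariance property itself can be checked numerically on a toy example. The sketch below uses the 4-fold rotation group C₄ instead of C₈, because 90-degree rotations are exact on a pixel grid; it is a stand-in for the idea, not EquiTac's network. The function name `c4_lift` and the random "tactile image" are assumptions for illustration.

```python
import numpy as np

def c4_lift(image, kernel):
    """Respond to `image` with the four 90-degree rotations of `kernel`.

    Returns one response per group element (rotation by 0, 90, 180, 270 degrees).
    """
    return np.array([np.sum(np.rot90(kernel, k) * image) for k in range(4)])

rng = np.random.default_rng(0)
image = rng.normal(size=(5, 5))   # stand-in for one channel of a tactile map
kernel = rng.normal(size=(5, 5))  # stand-in for a learned filter

out = c4_lift(image, kernel)
out_rot = c4_lift(np.rot90(image), kernel)

# Equivariance: rotating the input cyclically shifts the responses.
is_equivariant = np.allclose(out_rot, np.roll(out, 1))
```

Rotating the input does not produce unrelated features; it only permutes the responses across the group axis. A network built from such layers therefore sees a rotated contact as a predictable transformation of a known one, which is the property that supports generalization to unseen orientations.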

Although currently limited to yaw correction on 2-DoF grippers, the concept of cross-orientation transfer leveraging the geometric structure of tactile data presents principles extensible to dexterous hand tactile gaps. If OSMO is "physical alignment" through sensor hardware unification, EquiTac is "structural alignment" exploiting geometric symmetries in the data. However, since it addresses orientation generalization within a single robot rather than cross-embodiment transfer (human → robot), it provides principled insights rather than a direct solution for tactile embodiment gap resolution.

Tactile Residual Learning: An Untouched Domain

Just as DexH2R learned kinematic residuals via residual RL to gain roughly 40 percentage points, tactile residuals may also be learnable. The hypothesis is that systematic biases in tactile patterns arising from human-robot kinematic differences approximate constants that are independent of object or task, because the same robot always differs kinematically from the human hand in the same way.

If this hypothesis holds, tactile residuals learned from one task can transfer to others (cross-task generalization). This contrasts with DexH2R's task-specific residuals and is TacPlay's key differentiator.

However, virtually no experimental evidence yet supports this hypothesis. Even for DexH2R's kinematic residuals, cross-task generalization has not been verified. This is TacPlay's most ambitious and riskiest claim (Chapter 9).
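One way to make the hypothesis testable is to estimate a per-sensor offset on one task and measure whether it reduces the human-robot tactile mismatch on a different task. The sketch below runs that protocol on synthetic data that is generated under the hypothesis, so it only illustrates the evaluation procedure and is not evidence for the claim. The sensor count, noise level, and all variable names are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
n_sensors = 16
# Hypothetical embodiment-specific offset, assumed constant across tasks.
true_bias = rng.normal(size=n_sensors)

def simulate_task(n_samples):
    """Synthetic paired readings: robot = human + constant bias + small noise."""
    human = rng.normal(size=(n_samples, n_sensors))
    robot = human + true_bias + 0.05 * rng.normal(size=(n_samples, n_sensors))
    return human, robot

# Task A: estimate the tactile residual as the mean per-sensor difference.
human_a, robot_a = simulate_task(200)
bias_hat = (robot_a - human_a).mean(axis=0)

# Task B: check whether the residual learned on task A transfers.
human_b, robot_b = simulate_task(200)
err_raw = np.abs(robot_b - human_b).mean()
err_corrected = np.abs(robot_b - (human_b + bias_hat)).mean()
```

On real data the interesting outcome is the one this toy cannot show: if the corrected error on task B is no better than the raw error, the residual is task-dependent and the cross-task claim fails.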

6.5 Maturity Comparison Across Three Dimensions

| Gap Type | Solution | Representative Paper | Maturity |
|---|---|---|---|
| Visual | Cross-painting | Mirage | High |
| Visual | Robot overlay | H2R (+6.7–23.3%) | High |
| Visual | Robotized demos | Masquerade (5–6×) | High |
| Visual | Physical equivalence | UMI (90%, gripper) | High |
| Kinematic | Residual RL | DexH2R (about +40 pp) | Medium (sim only) |
| Kinematic | Motion manifold | Park et al. (0.59) | Early |
| Kinematic | Bimanual residual | ManipTrans (SOTA) | Medium (sim only) |
| Kinematic | Hardware co-design | ACT-1 (90%, 2-DoF) | Medium (gripper only) |
| Tactile | Shared platform | OSMO (only one) | Very early |
| Tactile | Equivariant repr. | EquiTac (90%, 10 demos) | Early (single robot) |
| Tactile | Residual learning | None | Unexplored |

The message is clear: the tactile embodiment gap is the emptiest space. The visual gap has multiple effective solutions (Mirage, H2R, Masquerade, UMI), the kinematic gap has promising results from DexH2R and ManipTrans, but the tactile gap has only OSMO's passive shared platform and EquiTac's single-robot equivariant representation.

6.6 Key Discussion

TacTeleOp's Visual Gap Strategy

TacTeleOp can leverage existing mature solutions for the visual gap. Applying Mirage's cross-painting or H2R's robot overlay to TacTeleOp's visual data processing lets it treat the visual gap as largely solved. TacTeleOp's contribution should therefore focus on the tactile gap and tactile co-training rather than on the visual gap.

TacPlay's Tactile Gap Strategy

TacPlay extends OSMO's passive Embodiment Bridge to active learning:

- Phase 1: Extract human tactile patterns as "tactile recipes" (= OSMO's data collection)

- Phase 2: Mount the same glove on the robot and run RL with human tactile patterns as "targets" (= extending DexH2R's residual RL to tactile)

- Phase 3: Deploy with learned tactile residuals (= DexH2R's $\pi_{human} + \Delta_{residual}$)

This pipeline is a novel combination of OSMO (shared platform) + DexH2R (residual RL). Each component exists, but this combination has never been attempted (Chapter 9).
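The Phase 2 idea of using human tactile patterns as RL targets can be sketched as a tracking reward. The source does not specify a reward form, so the exponential of the mean squared error used here is an assumption, as are the function name `tactile_tracking_reward`, the `scale` parameter, and the 8-sensor example; the sketch also assumes both signals come from the same glove and are already normalized.

```python
import numpy as np

def tactile_tracking_reward(robot_tactile, recipe_tactile, scale=1.0):
    """Reward in (0, 1] that peaks when the robot reproduces the human recipe.

    robot_tactile: current per-sensor readings from the glove on the robot.
    recipe_tactile: per-sensor target extracted from the human demonstration.
    scale: hypothetical sharpness parameter for the reward.
    """
    robot_tactile = np.asarray(robot_tactile, dtype=float)
    recipe_tactile = np.asarray(recipe_tactile, dtype=float)
    mse = np.mean((robot_tactile - recipe_tactile) ** 2)
    return float(np.exp(-scale * mse))

# Hypothetical 8-sensor readings at one timestep.
recipe = np.array([0.0, 0.2, 0.8, 0.9, 0.1, 0.0, 0.4, 0.3])
close_attempt = recipe + 0.05
poor_attempt = recipe + 0.50

r_perfect = tactile_tracking_reward(recipe, recipe)
r_close = tactile_tracking_reward(close_attempt, recipe)
r_poor = tactile_tracking_reward(poor_attempt, recipe)
```

A bounded reward of this kind is convenient to combine with a task reward, but whether exact pattern matching is even the right objective across different kinematics is precisely the open question raised in Section 6.4.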

Implications from Masquerade

Two findings from Masquerade [6] have significant implications for TacTeleOp:

- Log scaling: Edited human data volume and performance show logarithmic relationship. If the same pattern holds for tactile, the first few hundred hours of data will yield the largest effect.

- Co-training indispensable: Pure human data (robotized) is insufficient; co-training with robot data is essential. This supports TacTeleOp's Data A + Data B mixing strategy.
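The practical meaning of logarithmic scaling can be made concrete with a small fit. The data below is synthetic and noiseless, chosen only to show the procedure; it is not taken from Masquerade, and the function name `fit_log_scaling` is hypothetical.

```python
import numpy as np

def fit_log_scaling(data_volume, performance):
    """Least-squares fit of performance ~ a + b * ln(data_volume); returns (a, b)."""
    b, a = np.polyfit(np.log(data_volume), performance, deg=1)
    return a, b

# Synthetic example: data volume in arbitrary units, performance in [0, 1].
volume = np.array([10.0, 20.0, 40.0, 80.0, 160.0])
performance = 0.2 + 0.1 * np.log(volume)

a, b = fit_log_scaling(volume, performance)

# Under a log law every doubling of data adds the same absolute gain.
gain_per_doubling = b * np.log(2.0)
```

Under such a law, going from 10 to 20 units buys the same improvement as going from 80 to 160, which is why the earliest data is the most valuable per hour collected. Whether tactile data follows the same law remains an assumption to be tested.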

6.7 Connection to Our Direction

This chapter's analysis clarifies TacGlove/TacTeleOp/TacPlay's positioning:

- Visual gap: Already solved. Leverage existing solutions (Mirage, H2R).

- Kinematic gap: Residual RL is promising. Use existing retargeting in TacTeleOp Stage 2.

- Tactile gap: The emptiest space. TacPlay pioneers tactile residual learning.

Synthesizing Part II: Data B alone achieves ~70% but hits contact-rich limits (Chapter 4), co-training reaches 95% but excludes tactile (Chapter 5), and the tactile dimension of embodiment gap is nearly unresolved (Chapter 6). This motivates TacGlove (Chapter 7), TacTeleOp (Chapter 8), and TacPlay (Chapter 9) proposed in Part III.

References

- DexH2R (2024). Task-Oriented Residual RL for Dexterous Manipulation Transfer. arXiv.

- Li, et al. (2025). ManipTrans: Efficient Bimanual Dexterous Manipulation Transfer via Residual Learning. CVPR 2025.

- Park, S., et al. (2025). Learning to Transfer Human Hand Skills for Robot Manipulations. arXiv:2501.04169.

- Chen, L. Y., et al. (2024). Mirage: Cross-Embodiment Zero-Shot Transfer via Cross-Painting. RSS 2024.

- H2R (2025). Human-to-Robot Video Augmentation for Pretraining. arXiv.

- Lepert, et al. (2025). Masquerade: Scaling In-the-Wild Human Video to Bimanual Robot Policy Learning. arXiv.

- Yin, J., et al. (2025). OSMO: A Large-Scale Tactile Glove. arXiv. https://arxiv.org/abs/2512.08920 #18

- Liu, V., et al. (2025). EgoZero: Smart Glasses to Robot Policy. arXiv.

- Sunday Robotics (2025). ACT-1: Robot Foundation Model with Skill Transform. #29

- Chi, C., et al. (2024). UMI: Universal Manipulation Interface. RSS 2024. #35

- EquiTac (2025). Tactile Equivariance for Cross-Orientation Transfer. arXiv. #37