Chapter 2: Collecting Human Hand Data — From Sensors to Datasets

Summary

Hardware for collecting human hand data falls into four categories: motion tracking gloves, tactile gloves, wearable exoskeletons, and egocentric cameras. Each approach carries distinct trade-offs, and TacGlove's proposed "stretchable glove + tactile sensors + smart glasses" combination derives its design rationale from this analysis.

2.1 Introduction

Having established the value of Data B in Chapter 1, this chapter asks "with what, and how, do we collect it?" Between 2024 and 2026, diverse wearable data collection systems emerged, differing substantially in the modalities they capture (joint angles, forces, vision) and their operational conditions (cost, wearability, durability).

2.2 Motion Tracking Gloves

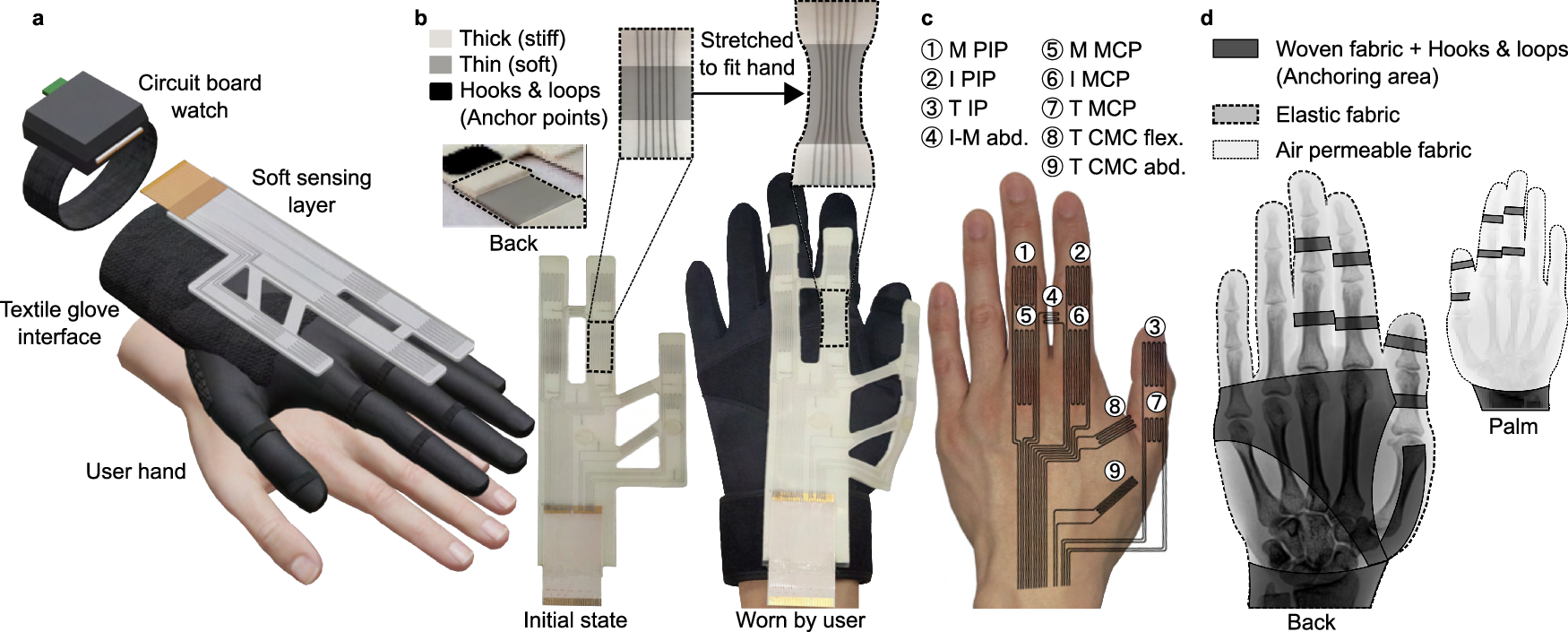

Park et al. (2024) — Stretchable eGaIn Glove

Park et al. [1] [#6] proposed a stretchable glove based on eGaIn (eutectic gallium-indium) liquid metal sensors. Nine eGaIn strain sensors placed over the finger joints estimate joint angles and bone lengths simultaneously.

| Metric | Value |
|---|---|
| Bone length error | 2.1 mm |
| Joint angle error | 4.16° |
| Fingertip position error | 4.02 mm |
| Sensor count | 9 eGaIn |
| Coverage | 3 fingers (thumb, index, middle) |
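To make the relationship between the three error metrics concrete, the following is a minimal sketch of how bone length and joint angle errors propagate to fingertip position through forward kinematics. It assumes a planar three-bone finger; the bone lengths, joint angles, and the helper `fingertip_position` are hypothetical illustrations and are not taken from Park et al.

```python
import numpy as np

def fingertip_position(bone_lengths_mm, joint_angles_deg):
    """Planar forward kinematics for a serial finger chain.

    Each joint angle is the flexion relative to the previous bone.
    """
    lengths = np.asarray(bone_lengths_mm, dtype=float)
    absolute = np.cumsum(np.radians(joint_angles_deg))
    return np.array([np.sum(lengths * np.cos(absolute)),
                     np.sum(lengths * np.sin(absolute))])

# Hypothetical index finger (proximal, middle, distal bones), not from the paper.
true_lengths = [45.0, 25.0, 20.0]
true_angles = [30.0, 40.0, 20.0]

# Perturb one bone and one joint by amounts on the order of the reported errors.
est_lengths = [45.0 + 2.1, 25.0, 20.0]
est_angles = [30.0, 40.0 + 4.16, 20.0]

error_mm = np.linalg.norm(
    fingertip_position(est_lengths, est_angles)
    - fingertip_position(true_lengths, true_angles)
)
print(f"fingertip error: {error_mm:.2f} mm")
```

The sketch only shows the mechanism: errors in lengths and angles combine along the kinematic chain, so the fingertip error is not a simple sum of the two. It does not attempt to reproduce the reported 4.02 mm figure.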

The key advantage is its stretchable nature. Being silicone-based, it does not impede natural grasping and adapts to varying hand sizes. It structurally avoids the deformation and wear issues of 3D-printed exoskeletons (a limitation acknowledged by DexUMI's authors).

Two critical limitations exist: (1) only 3 fingers covered — ring and pinky are absent, precluding whole-hand manipulation data. (2) No tactile sensors — it measures joint angles only, not contact forces.

TacGlove [#26] proposes extending this glove to 5 fingers and adding eight 3-axis magnetic tactile sensors (Chapter 7).

Other Motion Gloves: Manus, StretchSense

Manus Quantum and StretchSense are commercial motion capture gloves providing 20+ DoF joint angles. Designed primarily as teleoperation interfaces (Data A collection), they require additional engineering for robot-free Data B collection.

2.3 Tactile Gloves

Tactile gloves capture not only joint angles but also contact forces. The value of this additional modality is detailed in Chapter 3; here we focus on hardware comparison.

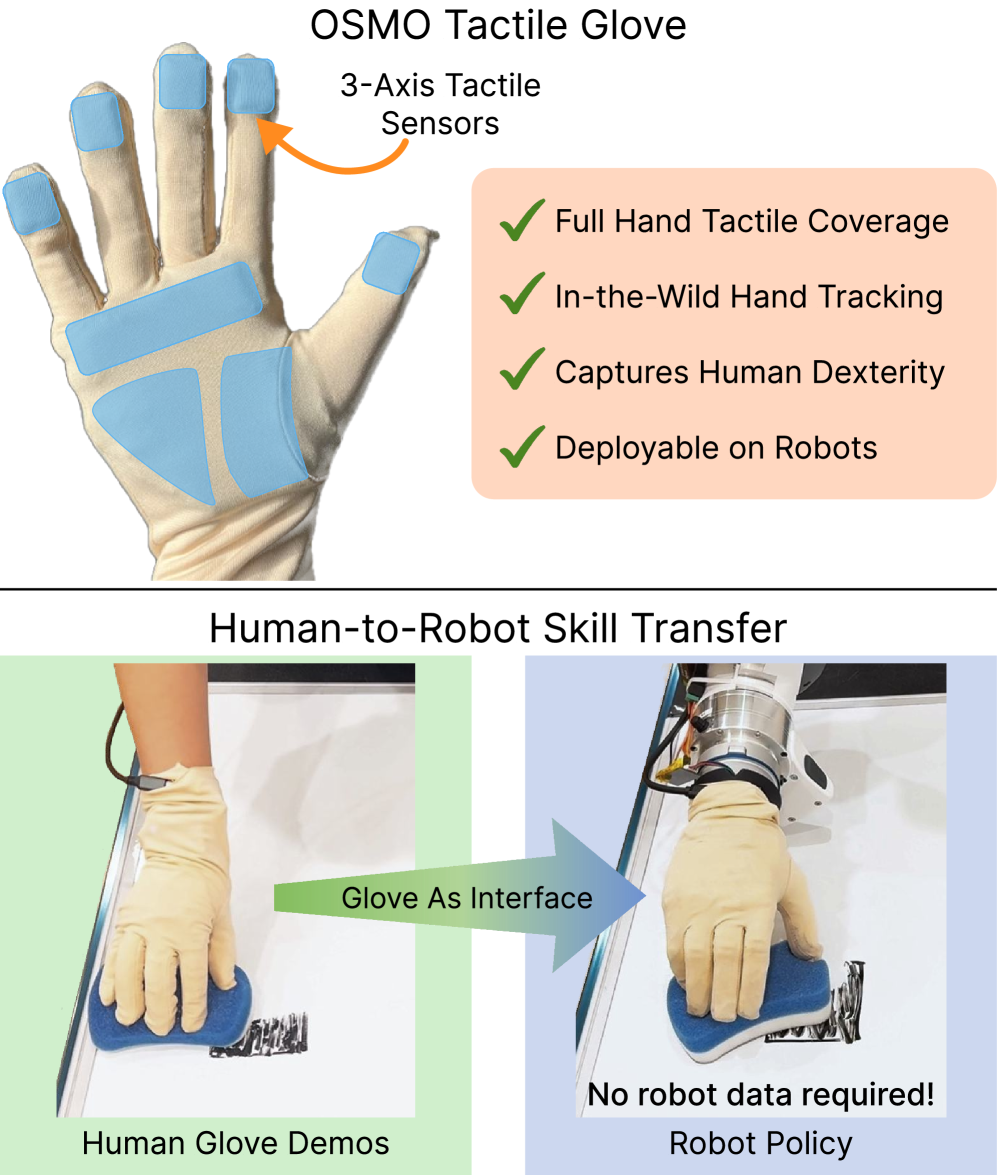

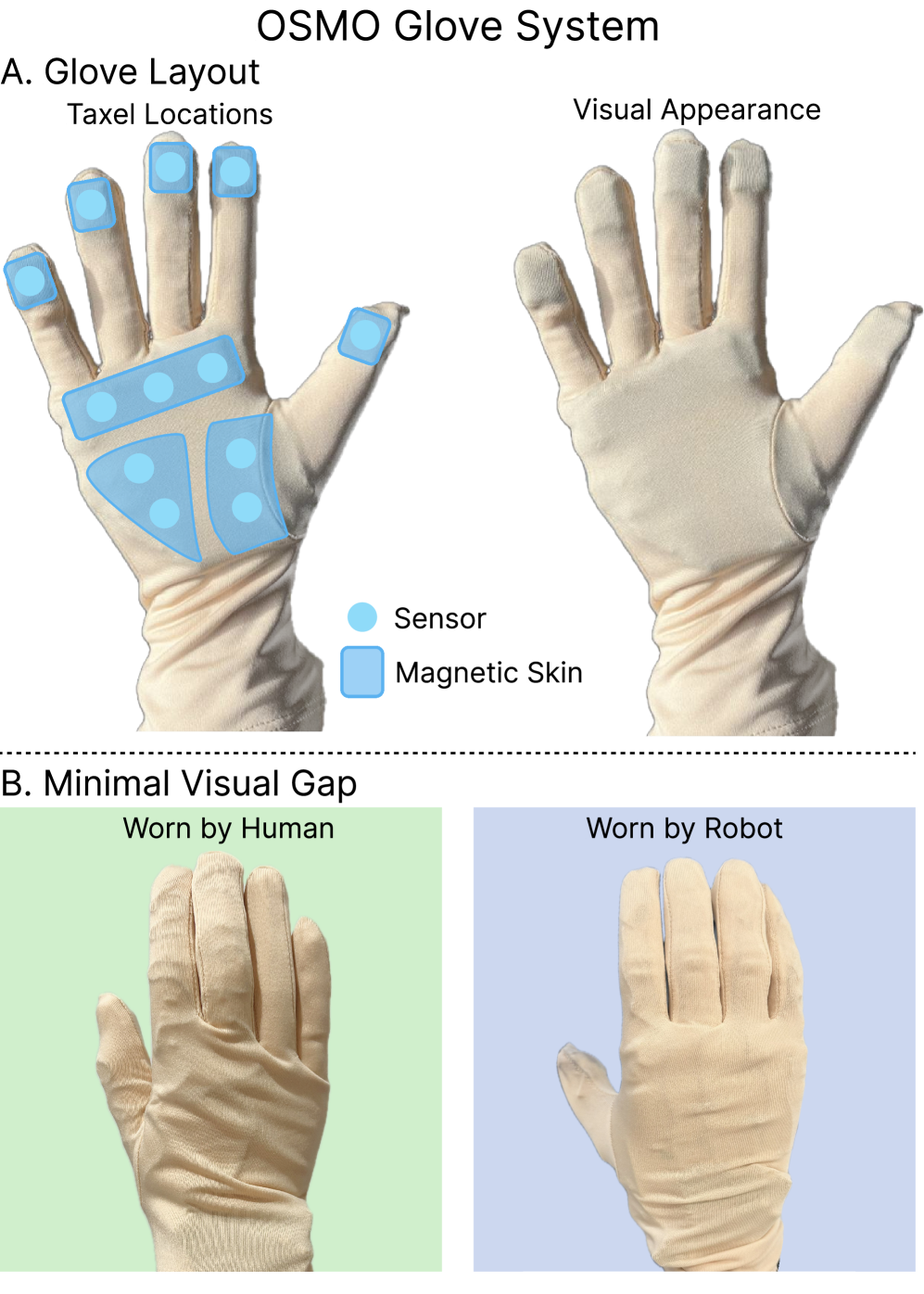

OSMO (Meta FAIR, 2025)

OSMO [2] [#18] is currently the most complete tactile glove system. Twelve 3-axis magnetic tactile sensors (taxels) are distributed across 5 fingers and 3 palm sections, measuring deformation of magnetic elastomers via BMM350 magnetometers.

| Feature | OSMO |
|---|---|
| Sensor type | 3-axis magnetic (magnetometer + magnetic elastomer) |
| Sensor count | 12 |
| Range | 0.3 – 80 N |
| Noise reduction | MuMetal shielding + dual magnetometer differential sensing (57% reduction) |
| Compatibility | Aria Gen 2, Quest 3, Apple Vision Pro, Manus Quantum, etc. |
| Human/robot shared | Mountable on Psyonic Ability Hand |
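The idea behind dual magnetometer differential sensing can be sketched as common-mode rejection: a second magnetometer that sees the same ambient field but not the elastomer's field is subtracted from the sensing magnetometer. The snippet below is an idealized illustration under that assumption; the signal shapes, amplitudes, and noise levels are invented, and it is not OSMO's actual signal pipeline.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 1000
t = np.arange(n) / 100.0  # hypothetical 100 Hz sampling

# Field change caused by elastomer deformation (arbitrary units, hypothetical).
contact_signal = 5.0 * (np.sin(2 * np.pi * 0.5 * t) > 0)
# Ambient disturbance seen by both magnetometers (hypothetical).
ambient = 20.0 * np.sin(2 * np.pi * 2.0 * t)

sensing = contact_signal + ambient + rng.normal(0.0, 0.2, n)
reference = ambient + rng.normal(0.0, 0.2, n)

# Differential reading: the shared ambient term cancels, sensor noise does not.
differential = sensing - reference

rms_before = np.sqrt(np.mean((sensing - contact_signal) ** 2))
rms_after = np.sqrt(np.mean((differential - contact_signal) ** 2))
print(f"RMS error single: {rms_before:.2f}, differential: {rms_after:.2f}")
```

In this idealized model the ambient term cancels almost completely. A real glove would see a smaller gain, since the two magnetometers do not experience exactly the same disturbance, which is consistent with the reported reduction being 57% rather than near-total.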

OSMO's most important contribution is the Embodiment Bridge concept. Placing the same glove on both the human and the robot resolves the visual and tactile embodiment gap at the hardware level. This concept is also a core premise of TacGlove/TacTeleOp/TacPlay [#27].

However, OSMO's experimental validation is limited: 1 task (wiping), 140 demos (~2 hours), and a single robot (Psyonic Ability Hand). The authors themselves acknowledged a "unimanual task with fairly limited dexterity." Large-scale multi-task validation and co-training pipelines remain unimplemented; Chapters 7 and 8 describe how TacGlove and TacTeleOp differ on these points.

Whole-hand Coverage: The Modality Spectrum

Two recent systems pursue the same whole-hand goal as OSMO through different modalities and densities. Sparsh-skin [16] instruments the Allegro hand with 368 Xela uSkin magnetic taxels (three 4×6 palm pads + 4 fingertips + 11 phalanges) sampled at ~100 Hz. The key empirical message is an ablation: removing the palm pads drops pose estimation accuracy by more than 10 percentage points, quantitatively establishing that "full-hand tactile perception is crucial." On the vision-based side, F-TAC Hand [17] embeds 17 VBTS units covering roughly 70% of the palmar surface at 0.1 mm resolution (~10,000 taxels/cm²); in multi-object delivery trials, adaptation rates rise from 53.5% (tactile absent) to near 100% once the high-resolution palm channel is active.
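The two density figures above can be checked with simple arithmetic. The palm pad size (4×6) and the totals come from the text; the per-pad counts for fingertips (30) and phalanges (16) are assumptions about the Xela uSkin modules, chosen here because they reproduce the stated total.

```python
# Sparsh-skin: three 4x6 palm pads + 4 fingertips + 11 phalanges.
palm_taxels = 3 * (4 * 6)        # 72 taxels on the palm
TAXELS_PER_FINGERTIP = 30        # assumption, not stated in the text
TAXELS_PER_PHALANGE = 16         # assumption, not stated in the text
total_taxels = (palm_taxels
                + 4 * TAXELS_PER_FINGERTIP
                + 11 * TAXELS_PER_PHALANGE)
print(total_taxels)              # 368

# F-TAC Hand: 0.1 mm resolution means 10 mm / 0.1 mm = 100 cells per cm edge.
cells_per_cm = round(10.0 / 0.1)
taxels_per_cm2 = cells_per_cm ** 2
print(taxels_per_cm2)            # 10000
```

Under these assumptions the palm accounts for 72 of 368 taxels, roughly a fifth of the channels.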

These three points — OSMO (magnetic, 12 channels), Sparsh-skin (magnetic, 368 channels), F-TAC (vision, 0.1 mm) — sample the whole-hand design space along different axes of resolution, channel count, and modality. TacGlove occupies a distinct coordinate on this spectrum: a human-wearable, stretchable form factor with moderate density and a shared human/robot platform (Chapter 7).

VTDexManip (Zhejiang University, ICLR 2025)

VTDexManip [3] presented the first visual-tactile dexterous manipulation dataset. Using a low-cost piezoresistive pressure sensor glove paired with HoloLens2, it collected 2,032 sequences (565K frames) from 5 participants across 10 tasks and 182 objects.

The key result: even binary tactile information yielded +20% improvement on RL benchmarks. Joint visual-tactile pretraining provided an additional +20%. These results are simulation-based; real-world validation was not performed.
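"Binary tactile information" here means a per-sensor contact/no-contact flag rather than a force value. A minimal sketch of deriving such a flag from pressure readings follows; the threshold value, the normalized readings, and the function name `binarize_contacts` are hypothetical and not taken from VTDexManip.

```python
import numpy as np

def binarize_contacts(pressure, threshold):
    """Map raw pressure readings to 0/1 contact flags."""
    return (np.asarray(pressure) > threshold).astype(np.uint8)

# Two frames of three hypothetical sensors, normalized to [0, 1].
readings = np.array([[0.02, 0.40, 0.00],
                     [0.15, 0.55, 0.08]])
contacts = binarize_contacts(readings, threshold=0.1)
print(contacts.tolist())  # [[0, 1, 0], [1, 1, 0]]
```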

TacCap (2025)

TacCap [4] uses FBG (Fiber Bragg Grating) optical sensors in a thimble form factor. It offers 10⁻⁵ strain resolution at up to 2 kHz sampling with EMI immunity, but the interrogator equipment is expensive and coverage is limited to fingertips.
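To give a sense of what 10⁻⁵ strain resolution demands of the interrogator, the standard FBG strain relation Δλ = λ_B (1 − p_e) ε can be evaluated directly. The centre wavelength (1550 nm) and effective photoelastic coefficient (0.22) below are typical textbook values for silica fibre and are assumptions, not TacCap parameters; temperature effects are ignored.

```python
def bragg_shift_pm(strain, center_wavelength_nm=1550.0, photoelastic_coeff=0.22):
    """Bragg wavelength shift in picometres for a given axial strain.

    Uses delta_lambda = lambda_B * (1 - p_e) * strain, neglecting temperature.
    """
    shift_nm = center_wavelength_nm * (1.0 - photoelastic_coeff) * strain
    return shift_nm * 1e3  # nm -> pm

shift = bragg_shift_pm(1e-5)
print(f"{shift:.2f} pm")  # 12.09 pm
```

Under these assumptions the interrogator must resolve a shift of roughly 12 pm, which helps explain why that equipment is the expensive part of the system.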

DOGlove (Tsinghua, RSS 2025)

DOGlove [5] implements 21-DoF motion capture + 5-DoF cable-driven force feedback + 5-DoF LRA haptics for under $600. It reported 85% on Press and Move Box and 70% on Pick and Place tasks. However, DOGlove is a teleoperation tool, not a data collection + learning system — no experiments used its tactile data for policy learning.

Tactile Glove Comparison

| System | Sensor Type | Count | Axes | Human/Robot Shared | Cost | Learning Validation |
|---|---|---|---|---|---|---|
| OSMO | Magnetic | 12 | 3-axis | Yes | Undisclosed | 72% (1 task) |
| VTDexManip | Piezoresistive | Many | Binary | No | Low | +20% (sim) |
| TacCap | FBG Optical | Many | Multi | Yes | High | None |
| DOGlove | Cable-driven | 5+5 | - | No (teleop) | <$600 | 85%/70% (teleop) |
| UMI-FT | CoinFT (capacitive 6-axis F/T) | 2 (1 per finger) | 6-axis | Yes (gripper) | Low | Yes (ACP) |

2.4 Wearable Exoskeletons

Exoskeletons mechanically capture the kinematics of the human hand. The key difference from gloves is that joint angles are directly measured via encoders.

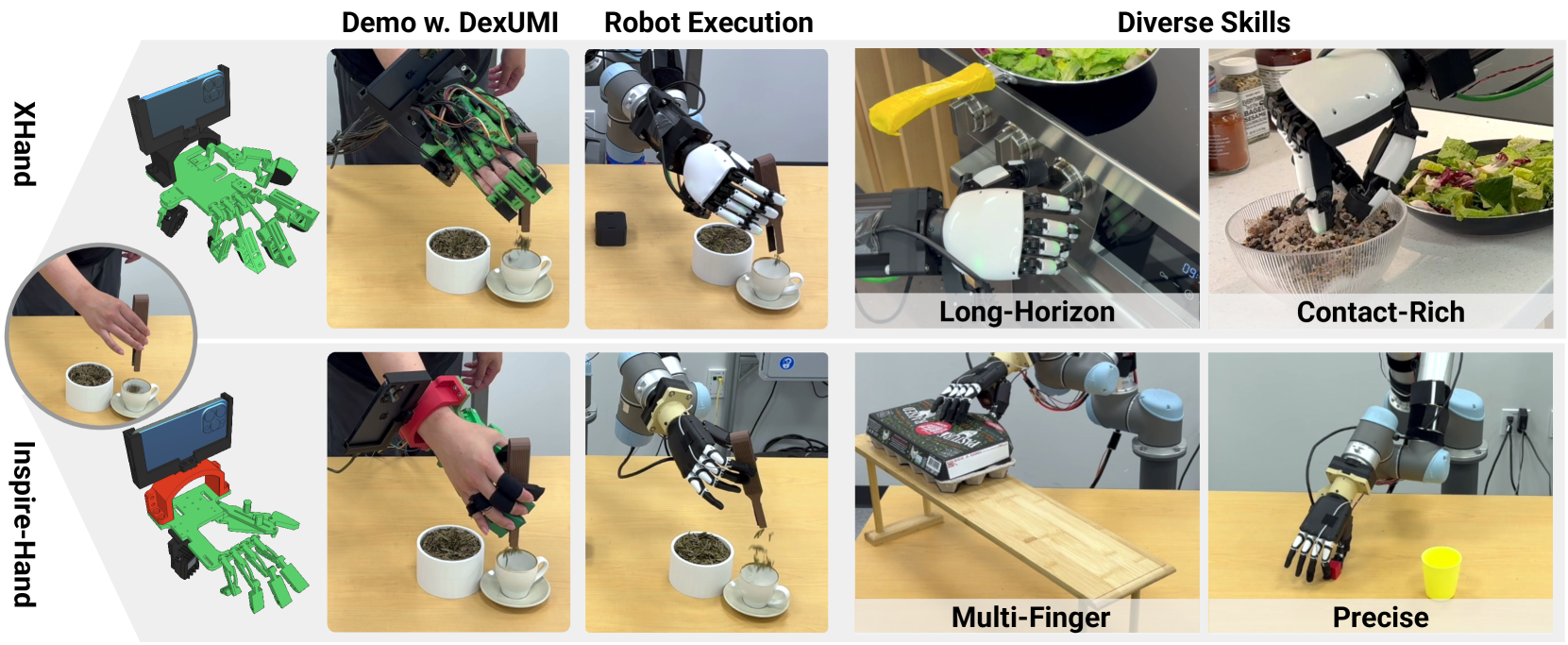

DexUMI (Stanford, 2025)

DexUMI [6] [#8] uses a 3D-printed exoskeleton + encoder combination for dexterous manipulation data collection. It reported 86% average success rate and 3.2× faster collection than teleoperation. Critically, its ablation showed that policies with tactile sensors succeeded at grasping, while those without failed.

The limitation is deformation of 3D-printed components. The authors identified exoskeleton deformation as a source of encoder inaccuracy. Additionally, robot hand inpainting is only possible offline, limiting real-time demonstrations.

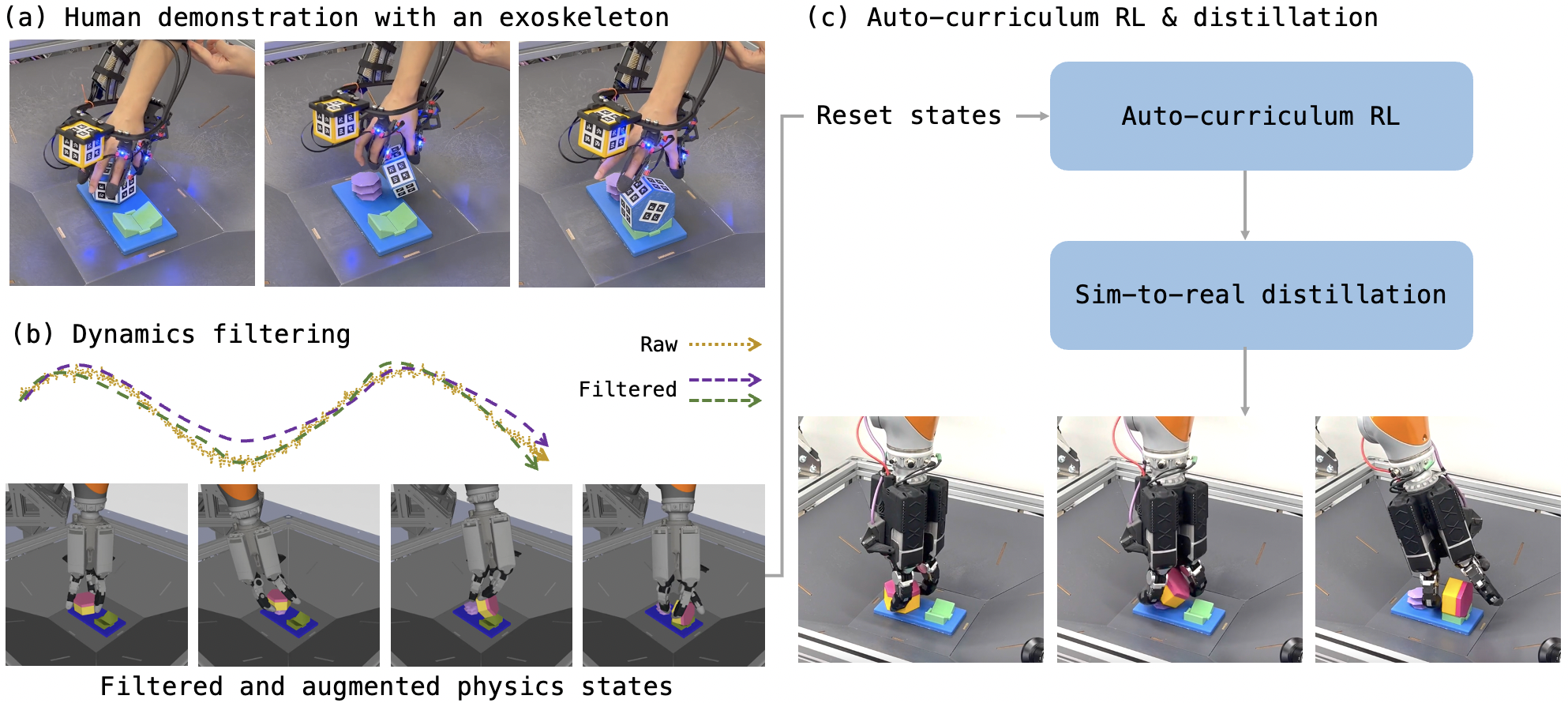

ExoStart (Google DeepMind, 2025)

ExoStart [7] [#9] collects only 9–15 demonstrations from a low-cost exoskeleton, then trains policies via RL in simulation. It achieved >50% success on tasks like AirPods case opening and key insertion, reporting 8× faster collection than teleoperation. Dynamics filtering and auto-curriculum RL are the key techniques.

UMI — Gripper-Mounted Interface (Stanford, 2024)

UMI [14] [#35] takes a unique approach distinct from exoskeletons or gloves: a handheld gripper. The human directly holds and operates a $371, 2-DoF gripper identical to the robot's, while a GoPro fisheye camera and IMU-based SLAM track the 6-DoF trajectory. Through relative trajectory representation, policies transfer across collection environments and robots — demonstrated with cross-robot transfer between UR5e and Franka.
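The reason a relative trajectory representation transfers across environments can be sketched with homogeneous transforms: if every pose is expressed relative to a reference pose on the same trajectory, any global change of world frame cancels out. The example below is a simplified illustration that uses the first pose as the reference; the helper names and the sample trajectory are hypothetical, and UMI's actual representation may differ in detail.

```python
import numpy as np

def make_pose(yaw_rad, translation):
    """Build a 4x4 homogeneous transform from a yaw angle and a translation."""
    c, s = np.cos(yaw_rad), np.sin(yaw_rad)
    pose = np.eye(4)
    pose[:3, :3] = [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]
    pose[:3, 3] = translation
    return pose

def to_relative(poses):
    """Express every pose relative to the first one: inv(T[0]) @ T[t]."""
    first_inv = np.linalg.inv(poses[0])
    return np.array([first_inv @ pose for pose in poses])

# Hypothetical gripper trajectory recorded in one world frame.
trajectory = np.array([make_pose(0.1 * k, [0.05 * k, 0.0, 0.02 * k])
                       for k in range(5)])

# The same motion as seen from a different world frame (e.g. another site).
world_shift = make_pose(1.2, [3.0, -1.0, 0.5])
shifted = np.array([world_shift @ pose for pose in trajectory])

# The relative representation is identical in both frames.
print(np.allclose(to_relative(trajectory), to_relative(shifted)))  # True
```

Because inv(W·T₀)·(W·Tₜ) = inv(T₀)·Tₜ for any world transform W, a policy trained on relative poses does not depend on where the SLAM origin or the robot base happens to be.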

Two key limitations exist. (1) Only a 2-DoF gripper is supported, so dexterous hand manipulation is impossible and tasks stay at the simple pick-and-place level. (2) There are no tactile sensors, so the system learns pure vision policies without contact force information. The follow-up work UMI-FT added 6-axis F/T sensing via CoinFT sensors, but only at coarse spatial resolution (one sensor per gripper finger, as listed in the tactile glove comparison table).

AirExo / AirExo-2 (SJTU, 2024/2025)

AirExo [8] and AirExo-2 [9] are ultra-low-cost passive exoskeletons at $300/arm. AirExo-2 achieved teleoperation-level performance using only in-the-wild data. "3 min teleop + in-wild ≥ 20 min teleop only" clearly demonstrates the power of combining small amounts of robot data with large volumes of human data. However, it is limited to arm-level gripper tasks without dexterous hand support.

Wearable Comparison

| System | Cost | Type | Tactile | Robot-free | Dexterous |
|---|---|---|---|---|---|
| DexUMI | Undisclosed | Exo | Partial | Yes | Yes |
| ExoStart | Low | Exo | No | Yes | Yes |
| AirExo-2 | $0.6K | Passive exo | No | Yes | No (arm) |
| HumanoidExo | - | Exo | No | No | No (arm) |
| NuExo | High | Active exo | Partial | No | No (arm) |

2.5 Egocentric Video Datasets

These approaches collect human hand data using cameras alone, without gloves or exoskeletons.

EgoDex (Apple, 2025)

EgoDex [10] is the largest existing dexterous manipulation dataset, leveraging Apple Vision Pro's ARKit: 829 hours, 90M frames, 338,000 episodes, 194 tasks with per-finger 30 Hz 3D pose tracking. It is limited to lab/home environments, and tactile and force information are entirely absent.
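The scale figures are mutually consistent, which a quick calculation confirms. The inputs are the numbers stated above; the only assumption is that the full 829 hours are recorded at the stated 30 Hz.

```python
HOURS = 829
FPS = 30          # stated tracking rate, assumed constant over the dataset
EPISODES = 338_000

total_seconds = HOURS * 3600
total_frames = total_seconds * FPS
mean_episode_s = total_seconds / EPISODES

print(total_frames)              # 89532000, i.e. about 90M frames
print(round(mean_episode_s, 1))  # 8.8 seconds per episode on average
```

An average episode of under nine seconds indicates that the dataset consists of short, task-focused clips rather than long continuous recordings.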

Ego4D (Meta, 2022)

Ego4D [11] comprises 3,670 hours of daily-life video from 931 participants across 9 countries. It serves as a key pretraining source for subsequent work (PhysBrain, EgoScale). Its limitation is that manipulation-relevant content constitutes a small fraction of the general daily-life footage.

BuildAI Egocentric-10K (2025)

BuildAI [12] is the largest egocentric dataset collected in actual factories: 10,000 hours, 1.08 billion frames, 2,153 workers, Apache 2.0 license. PhysBrain [13] converted it to VQA format, achieving 53.9% on SimplerEnv. However, without tactile data or per-finger hand tracking, it has limited utility for precise manipulation learning.

Dataset Comparison

| Dataset | Scale | Environment | Hand Tracking | Tactile | Industrial |
|---|---|---|---|---|---|
| EgoDex | 829 hr | Lab/home | Per-finger 3D | No | No |
| Ego4D | 3,670 hr | Diverse daily | Limited | No | No |
| BuildAI | 10,000 hr | Factory | No | No | Yes |

2.6 Key Discussion: What Is Missing

The above analysis reveals systematic gaps:

- Absence of tactile data: Across all 16 data collection papers surveyed, only OSMO (1 task) and VTDexManip (simulation) used tactile data for learning. No pipeline exists that carries large-scale tactile data collection through to robot transfer.

- Absence of integration: No system simultaneously collects joint angles (glove), tactile forces (tactile sensors), and vision (smart glasses). Existing work uses each modality independently.

- Absence of industrial settings: Except for BuildAI, all datasets were collected in lab/home environments. Even BuildAI lacks tactile data and hand tracking.

UMI successfully demonstrated robot-free data collection, but it lacks both tactile sensing and dexterous hand support — precisely the gaps TacGlove addresses.

TacGlove (Chapter 7) aims to address the hardware gap with a single system: extending the stretchable glove of Park et al. (2024) to 5 fingers, adding eight OSMO-style 3-axis magnetic tactile sensors, and synchronizing with smart glasses for industrial deployment. TacTeleOp (Chapter 8) then builds the co-training pipeline to convert this data into robot policies.
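Collecting joint angles, tactile forces, and vision together requires aligning streams that run at different rates. One common approach, sketched below, is to pick for each camera frame the nearest sample of each other stream by timestamp. The stream rates and the function `nearest_indices` are hypothetical; this is not a description of TacGlove's synchronization method.

```python
import numpy as np

def nearest_indices(reference_times, stream_times):
    """For each reference timestamp, return the index of the closest stream sample.

    Both arrays must be sorted in ascending order.
    """
    right = np.searchsorted(stream_times, reference_times)
    right = np.clip(right, 1, len(stream_times) - 1)
    left = right - 1
    choose_left = ((reference_times - stream_times[left])
                   <= (stream_times[right] - reference_times))
    return np.where(choose_left, left, right)

# Hypothetical one-second recording: 30 Hz camera, 100 Hz tactile sensors.
camera_t = np.arange(30) / 30.0
tactile_t = np.arange(100) / 100.0

idx = nearest_indices(camera_t, tactile_t)
residual = np.abs(tactile_t[idx] - camera_t)
print(f"max misalignment: {residual.max() * 1e3:.1f} ms")
```

With nearest-sample matching the worst-case misalignment is half the period of the faster stream, so here it is bounded by 5 ms. Hardware triggering or interpolation would be needed for tighter alignment.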

2.7 Connection to Our Direction

TacGlove's hardware design is positioned as follows within the above comparison:

- Park et al.'s stretchable property → natural grasp preservation, factory durability (avoiding DexUMI's 3D-printed deformation issues)

- OSMO's 3-axis magnetic sensors → human-robot shared Embodiment Bridge (shared tactile space)

- AoE's always-on paradigm → workers wear continuously for natural data collection

- Modality richness vs BuildAI → smaller scale (800 hr vs 10,000 hr) but differentiated through tactile + per-finger tracking

Chapter 3 analyzes quantitatively why the tactile modality is essential.

References

1. Park, M., Park, Y.-L., et al. (2024). Stretchable Glove for Hand Motion Estimation. Nature Communications. https://www.nature.com/articles/s41467-024-50101-w [#6]

2. Yin, J., et al. (2025). OSMO: A Large-Scale Tactile Glove for Human-to-Robot Manipulation Transfer. arXiv. https://arxiv.org/abs/2512.08920 [#18]

3. Liu, Q., et al. (2025). VTDexManip: Visual-Tactile Dexterous Manipulation Dataset. ICLR 2025.

4. Ren, T.-A., et al. (2025). TacCap: FBG-Based Optical Tactile Thimble. arXiv.

5. Zhang, H., et al. (2025). DOGlove: Open-Source Haptic Feedback Glove. RSS 2025.

6. Xu, M., et al. (2025). DexUMI: Universal Manipulation Interface for Dexterous Hands. arXiv. [#8]

7. Si, Z., et al. (2025). ExoStart: Exoskeleton-Aided Dexterous Manipulation from One Demo. arXiv. [#9]

8. SJTU (2024). AirExo: Low-Cost Exoskeletons for Learning Whole-Arm Manipulation in the Wild. ICRA 2024.

9. SJTU (2025). AirExo-2: In-the-Wild Data Collection for Robot Learning. CoRL 2025 Oral.

10. Hoque, R., et al. (2025). EgoDex: Egocentric Dexterous Manipulation Dataset. arXiv.

11. Grauman, K., et al. (2022). Ego4D: Around the World in 3,000 Hours of Egocentric Video. CVPR 2022.

12. BuildAI (2025). Egocentric-10K: Factory Egocentric Video Dataset. Hugging Face.

13. PhysBrain (2025). Egocentric2Embodiment Pipeline. arXiv.

14. Chi, C., et al. (2024). Universal Manipulation Interface: In-The-Wild Robot Teaching Without In-The-Wild Robots. RSS 2024. https://umi-gripper.github.io/ [#35]

15. Chi, C., et al. (2025). UMI on Legs: Making Manipulation Policies Mobile with Manipulation-Centric Whole-body Controllers. arXiv.

16. Sharma, A., et al. (2025). Self-supervised perception for tactile skin covered dexterous hands (Sparsh-skin). arXiv. https://arxiv.org/abs/2505.11420

17. Zhao, Z., et al. (2025). Embedding high-resolution touch across robotic hands enables adaptive human-like grasping (F-TAC Hand). Nature Machine Intelligence. https://arxiv.org/abs/2412.14482 [#39]